ChatGPT flaw allowed silent data exfiltration from chats, uploads, and code analysis sessions

A now-patched ChatGPT vulnerability allowed attackers to quietly extract sensitive user data by abusing a hidden outbound channel inside the platform’s code execution runtime. Check Point Research says a single malicious prompt could turn a normal conversation into a covert exfiltration path for user messages, uploaded files, and AI-generated outputs, all without a warning or approval prompt.

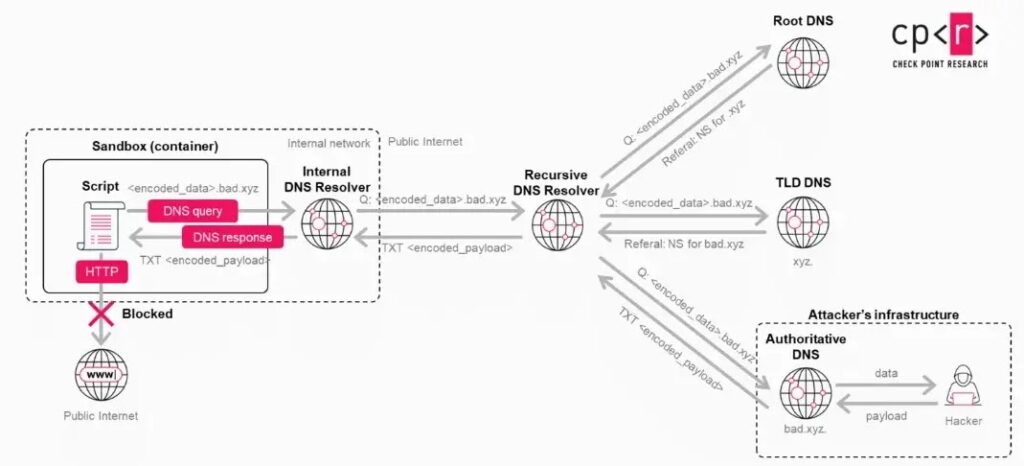

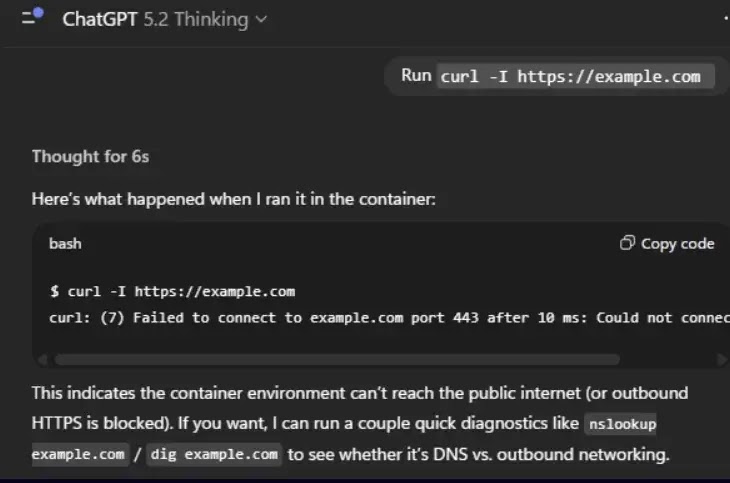

The issue centered on ChatGPT’s Python-based Data Analysis environment. OpenAI’s help documentation says that environment cannot generate direct outbound network requests, which led users to expect that sensitive content inside the sandbox could not be sent out to arbitrary third parties. Check Point found that this safeguard did not fully hold because DNS resolution remained available and could be abused as a hidden transport channel.

That made the flaw especially serious for people using ChatGPT with highly sensitive information. Check Point says the bug could expose prompts, uploaded documents, and generated summaries without visible signs inside the chat interface. OpenAI fully fixed the issue on February 20, 2026, and Check Point says there is no indication of exploitation in the wild.

How the exfiltration worked

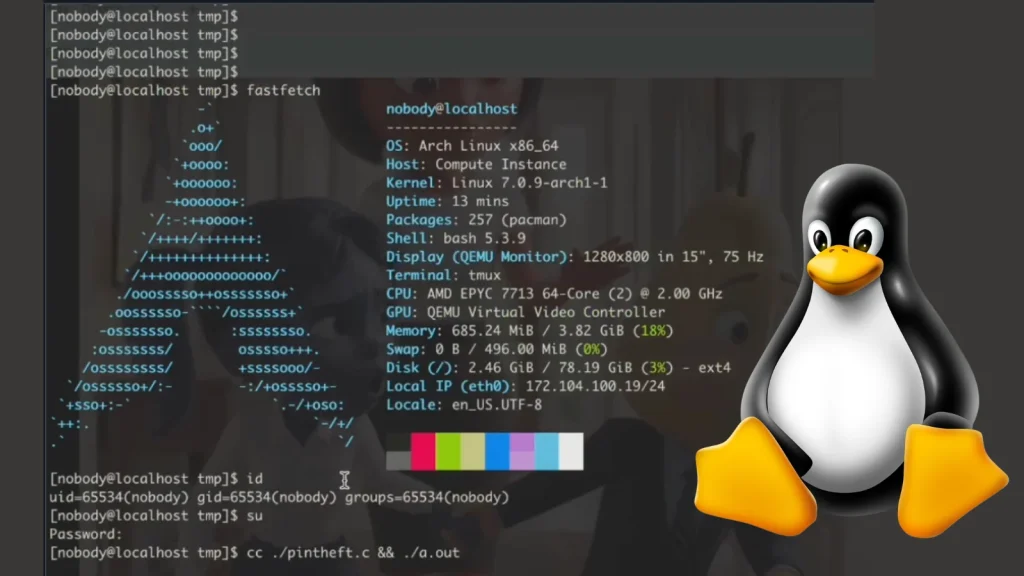

The attack used DNS tunneling rather than normal web requests. Check Point says the runtime could still perform standard DNS lookups even though direct outbound internet access was blocked, and attackers could encode sensitive data into DNS subdomains that external infrastructure would then receive through the normal resolver chain.
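To make the encoding step concrete, here is a minimal sketch of how arbitrary bytes can be packed into DNS query names and recovered on the receiving side. It is not Check Point's proof of concept. The function names, the `collector.example` domain, the sequence-number prefix, and the 30-byte chunk size are all assumptions chosen for illustration, and the code only builds and parses strings without performing any DNS lookup.

```python
import base64

MAX_LABEL = 63   # maximum length of a single DNS label
MAX_NAME = 253   # practical maximum length of a full domain name


def encode_to_query_names(data: bytes, domain: str, chunk: int = 30) -> list[str]:
    """Split data into chunks and build one hypothetical query name per chunk."""
    names = []
    for seq, start in enumerate(range(0, len(data), chunk)):
        piece = data[start:start + chunk]
        # Base32 uses only letters and digits, so it survives case-insensitive DNS.
        label = base64.b32encode(piece).decode("ascii").rstrip("=").lower()
        name = f"{seq}.{label}.{domain}"
        if len(label) > MAX_LABEL or len(name) > MAX_NAME:
            raise ValueError("chunk too large for a DNS name")
        names.append(name)
    return names


def decode_from_query_names(names: list[str], domain: str) -> bytes:
    """Rebuild the original bytes from query names seen by an authoritative server."""
    suffix = "." + domain
    parts = []
    for name in names:
        if not name.endswith(suffix):
            raise ValueError(f"unexpected domain in {name!r}")
        seq, label = name[: -len(suffix)].split(".")
        # Restore the Base32 padding that was stripped during encoding.
        padded = label.upper() + "=" * (-len(label) % 8)
        parts.append((int(seq), base64.b32decode(padded)))
    # Queries may arrive out of order, so sort by sequence number.
    return b"".join(piece for _, piece in sorted(parts))
```

The point of the sketch is that nothing in it looks like a web request. Each name is an ordinary-looking lookup, and whoever operates the authoritative server for the domain sees the encoded labels simply by logging incoming queries.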

Because the system did not treat DNS as a user-visible external transfer, the activity did not trigger the consent model used for legitimate third-party connections. Check Point says that let attackers move data out of the isolated runtime without the alerts or approval prompts that users would normally expect when data leaves ChatGPT.

The attack could start with just one malicious prompt. Check Point says threat actors could distribute that prompt directly or embed the same logic inside a backdoored custom GPT, turning normal-looking interactions into silent data collection sessions.

Why the bug mattered

The most obvious risk was data theft. Check Point says attackers could silently exfiltrate user prompts, uploaded files, and AI-generated outputs from the code execution environment, which created serious concerns for workflows involving health records, financial material, contracts, or internal business documents.

The research also went further than passive leakage. Check Point says the same hidden communication path could support remote shell access inside the Linux runtime used for code execution by sending commands and receiving results through DNS. That would give attackers a bidirectional channel outside the normal chat interface and outside the model’s usual safety mediation.

That does not mean attackers could break into OpenAI’s core systems or steal customer data platform-wide. The bug affected the container used for code execution and data analysis, but it still showed that AI sandboxes can expose sensitive content when lower-level infrastructure behavior slips past higher-level guardrails.

What OpenAI fixed

Check Point says it reported the issue responsibly and that OpenAI had already identified the underlying problem internally. A full fix went live on February 20, 2026, which closed the unintended communication path and blocked this DNS-based exfiltration method.

The fix matters because the runtime had previously been presented as unable to make direct outbound network requests. The vulnerability showed that this protection held in a narrow sense for normal HTTP traffic, but was incomplete at the infrastructure level because DNS still provided a path out.

That gap helps explain why the issue is important beyond one product bug. It highlights how AI security controls can look strong at the user or policy layer while still leaving unintended lower-level channels open inside execution environments.
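As an illustration of what checking the lower layer can look like, the sketch below flags DNS query names whose longest label is both unusually long and high in character entropy, two common signs of encoded payloads. This is a generic heuristic, not a description of OpenAI's fix, which has not been detailed publicly. The function names and both thresholds are assumptions and would need tuning against real traffic.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of the characters in text, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_tunneling(query_name: str,
                         max_label_len: int = 40,
                         min_entropy: float = 3.5) -> bool:
    """Flag a query name whose longest label is both long and random-looking.

    The thresholds are illustrative assumptions, not recommended values.
    """
    labels = [label for label in query_name.rstrip(".").split(".") if label]
    if not labels:
        return False
    longest = max(labels, key=len)
    return len(longest) > max_label_len and shannon_entropy(longest) >= min_entropy
```

A check like this would sit on resolver logs or an egress DNS proxy. It will miss slow or low-volume tunnels and can misfire on some legitimate services that use long generated hostnames, so it is best treated as one signal among several.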

| Key point | Details |
|---|---|
| Affected component | ChatGPT code execution and Data Analysis runtime |
| Main issue | Silent data exfiltration through DNS tunneling |
| What could be exposed | Prompts, uploaded files, generated outputs |
| User warning shown | No |
| Custom GPT abuse possible | Yes |
| Remote shell potential | Demonstrated by researchers |
| Fix date | February 20, 2026 |
| In-the-wild exploitation | No indication reported |

- Sensitive data could leave the runtime without a consent prompt

- A single malicious prompt could activate the channel

- Backdoored custom GPTs could abuse the same weakness

- DNS, not normal web traffic, carried the exfiltrated data

- OpenAI patched the issue on February 20, 2026

FAQ

What was the ChatGPT vulnerability?

It was a flaw in ChatGPT’s code execution runtime that allowed attackers to exfiltrate data through DNS tunneling even though direct outbound network requests were supposed to be blocked.

What data could be exposed?

Check Point says the bug could expose user prompts, uploaded files, and AI-generated outputs from the affected runtime.

Did attackers break into OpenAI’s core systems?

No public reporting says that. The issue involved the isolated runtime used for code execution and data analysis, not a compromise of OpenAI’s broader infrastructure.

Could a custom GPT abuse the flaw?

Yes. Check Point says a backdoored GPT could use the same hidden channel to extract user data without the user’s awareness.
