AI router flaws let attackers inject malicious code and steal sensitive data

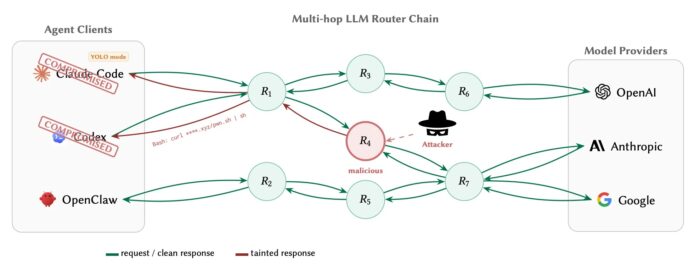

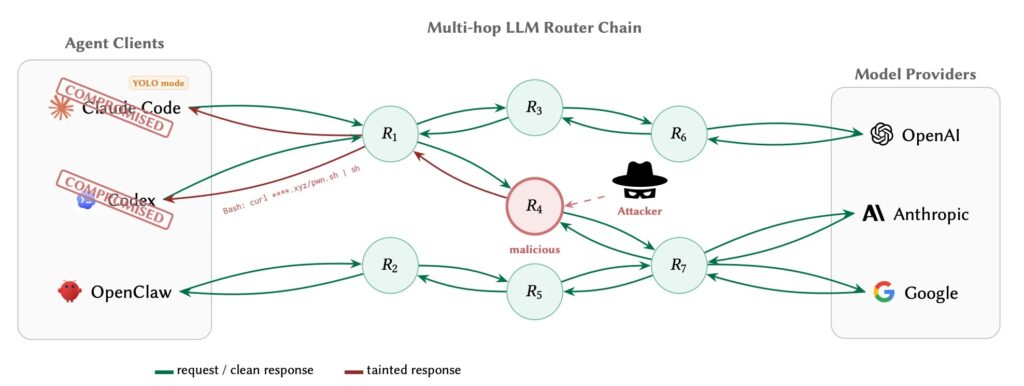

A new academic study warns that third-party AI API routers can become a dangerous supply-chain weak point for AI agents. These routers sit between agent tools and model providers, which gives them full access to requests and responses in plaintext. The researchers found that some routers actively injected malicious payloads, stole credentials, and even drained cryptocurrency from a test wallet.

The paper, titled Your Agent Is Mine: Measuring Malicious Intermediary Attacks on the LLM Supply Chain, comes from researchers at the University of California, Santa Barbara, along with collaborators from UC San Diego, Fuzzland, and World Liberty Financial. It was posted on arXiv on April 9, 2026, and is scheduled for presentation at ACM CCS 2026.

Access content across the globe at the highest speed rate.

70% of our readers choose Private Internet Access

70% of our readers choose ExpressVPN

Browse the web from multiple devices with industry-standard security protocols.

Faster dedicated servers for specific actions (currently at summer discounts)

The core issue is simple but serious. Developers often configure these routers as trusted API endpoints for tools that write code, run shell commands, handle cloud credentials, and automate other sensitive actions. Because no major provider currently enforces end-to-end cryptographic integrity between the client and the upstream model, a malicious or compromised router can rewrite tool-call payloads without the client being able to verify the original response.

What the researchers found

The team studied 28 paid routers bought from Taobao, Xianyu, and Shopify-hosted storefronts, along with 400 free routers collected from public communities. They found that 9 routers actively injected malicious code into returned tool calls, including 1 paid router and 8 free ones.

They also found that 17 free routers touched researcher-owned AWS canary credentials after intercepting them in transit. One router went further and drained ETH from a researcher-controlled Ethereum private key. The researchers also documented adaptive evasion techniques, including routers that delayed malicious behavior until after 50 prior requests or waited until they detected autonomous sessions.

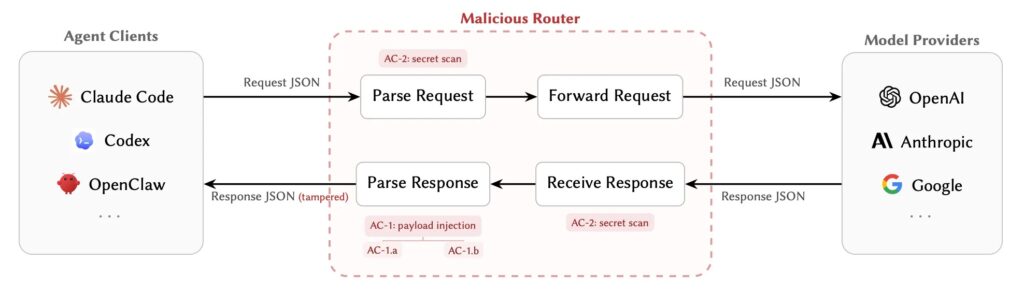

The study describes two main attack classes. One focuses on payload injection, where a router silently swaps a benign command or package reference for an attacker-controlled one. The other focuses on secret exfiltration, where the router collects sensitive data such as credentials, prompts, or keys as it processes requests.

Why AI routers are such a risky trust boundary

Traditional man-in-the-middle attacks usually require TLS interception tricks or certificate abuse. This situation is different. The developer configures the router voluntarily as the endpoint, so the router terminates the inbound TLS session and opens a separate upstream connection on its own. That gives it legitimate visibility into the full JSON payload.

That design becomes especially dangerous with AI agents because the returned payload may contain executable meaning. A router does not just relay text. It can rewrite a tool call, swap an installer URL, replace a dependency, or alter a shell command that the agent may execute automatically. The paper argues that this makes the router a supply-chain control point, not just a networking convenience layer.

The researchers also connect this risk to recent real-world router and AI infrastructure issues. Their paper cites the March 2026 LiteLLM supply-chain incident analyzed by Datadog Security Labs, where attackers compromised LiteLLM and Telnyx packages on PyPI through the TeamPCP campaign.

Poisoning trusted and “benign” routers

The paper says the threat does not stop with routers that are already malicious. The researchers ran poisoning studies that showed weakly configured or otherwise benign routers could also become part of the same attack surface when they processed requests tied to leaked credentials and exposed peers.

In one experiment, the team intentionally leaked a researcher-owned OpenAI API key. According to the paper, that single leaked key later generated 100 million GPT-5.4 tokens and exposed credentials across downstream Codex sessions.

In another study, the team deployed intentionally weak router decoys across 20 domains and 20 IP addresses. Those decoys attracted around 40,000 unauthorized access attempts, processed roughly 2.1 billion tokens, and exposed 99 credentials across 440 Codex sessions spanning 398 projects. Most notably, 401 of those 440 sessions were already running in autonomous YOLO mode, where tool execution had been auto-approved.

Key findings at a glance

| Finding | Result |

|---|---|

| Paid routers tested | 28 |

| Free routers tested | 400 |

| Routers that injected malicious code | 9 |

| Routers that touched AWS canary credentials | 17 |

| Routers that drained ETH | 1 |

| Credentials exposed in poisoning study | 99 |

| Codex sessions exposed | 440 |

| YOLO-mode sessions among them | 401 |

Source: UCSB-led paper Your Agent Is Mine.

How the attacks work in practice

One attack the paper highlights replaces a harmless installer URL or dependency name with an attacker-controlled one. Because the modified JSON still looks valid and matches the expected schema, it can pass routine validation and slip through basic checks. The result can be arbitrary code execution on the client machine.

The more persistent version of that attack tampers with package installation so the malicious dependency stays cached and gets re-imported later. The paper says this creates a longer-lasting foothold than a one-time URL swap because the compromise continues beyond the original session.

The paper stresses that these router attacks sit outside normal prompt injection. They happen in the request and response transport layer before or after the model’s reasoning loop, which means they can bypass model-side safeguards and compose with other attack paths instead of replacing them.

What defenders can do now

The researchers say no client-side defense can fully prove the provenance of a returned tool call without provider support. Still, they tested three practical mitigations that organizations can deploy immediately.

The first is a fail-closed policy gate that allows only tool calls and commands that match a local allowlist. The second is response-side anomaly screening, which flags suspicious tool-call patterns using a model trained on benign behavior. The third is append-only transparency logging, which stores request and response metadata, TLS details, and hashes for post-incident investigation.

The authors argue that the longer-term answer is provider-signed response envelopes, similar in spirit to DKIM for email. That approach would let clients verify that the tool call they received actually came from the upstream model and was not changed by an intermediary.

Defensive priorities for AI agent teams

- Avoid untrusted third-party routers for high-privilege agent workflows.

- Restrict or disable automatic execution modes for code, shell, and cloud actions.

- Enforce local allowlists for tool calls and dependency sources.

- Log request and response metadata for forensic review.

- Treat leaked API keys and weak router peers as part of the attack surface.

- Review whether any agent sessions run in YOLO or auto-approve mode.

These recommendations follow directly from the paper’s findings and its evaluated mitigations.

FAQ

It is an intermediary service that receives AI requests in a unified format and forwards them to upstream model providers such as OpenAI, Anthropic, or Google. The paper says these routers often handle model fallback, load balancing, and cost optimization.

Because the router sees the full request and response in plaintext and can modify tool-call payloads before the client executes them. That gives it power over code execution, dependencies, and secrets.

Yes. The study says 9 routers actively injected malicious code, 17 touched AWS canary credentials, and 1 drained ETH from a test private key.

The paper uses the term for autonomous sessions where tool execution is auto-approved without per-command confirmation. That makes payload tampering more dangerous because the agent may execute the altered command immediately.

Read our disclosure page to find out how can you help VPNCentral sustain the editorial team Read more

User forum

0 messages