LangChain and LangGraph flaws can expose files, secrets, and chat data across AI apps

Three security flaws in LangChain and LangGraph can expose sensitive files, environment secrets, and stored conversation data in AI applications that use the frameworks. Cyera disclosed the issues this week, and maintainers have already shipped patches for the affected packages.

The bugs matter because LangChain and LangGraph sit deep inside many enterprise AI stacks. They often connect models to files, APIs, databases, and memory systems, which means a flaw in the framework can open a path to data far beyond the model itself.

Cyera says the three issues create separate attack paths. One can read files from the host system, another can leak secrets from the environment, and the third can expose or manipulate conversation history stored in SQLite checkpoints.

What the three LangChain and LangGraph CVEs do

The first issue, CVE-2026-34070, is a path traversal bug in legacy load_prompt functions in langchain_core.prompts.loading. GitHub’s advisory says applications that pass user-influenced prompt configurations into these functions can let attackers read arbitrary files on the host, limited mainly by extension checks such as .txt, .json, and .yaml.
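For applications that must accept a prompt file name from a user, one common defense against this class of bug is to resolve the path and confirm it stays inside a fixed directory. The sketch below is illustrative only and is not the upstream patch; `resolve_prompt_path` and `ALLOWED_SUFFIXES` are hypothetical names, and the suffix list simply mirrors the extensions named in the advisory.

```python
from pathlib import Path

# Assumption: mirrors the extensions mentioned in the advisory.
ALLOWED_SUFFIXES = {".txt", ".json", ".yaml"}


def resolve_prompt_path(base_dir: str, user_path: str) -> Path:
    """Return a path under base_dir, or raise ValueError (hypothetical helper)."""
    base = Path(base_dir).resolve()
    # resolve() collapses ".." segments and follows symlinks before the check.
    candidate = (base / user_path).resolve()
    if not candidate.is_relative_to(base):  # Python 3.9+
        raise ValueError(f"path escapes prompt directory: {user_path!r}")
    if candidate.suffix not in ALLOWED_SUFFIXES:
        raise ValueError(f"unsupported prompt file type: {candidate.suffix!r}")
    return candidate
```

An absolute `user_path` replaces the base when joined, so it fails the containment check in the same way as a `../` sequence.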

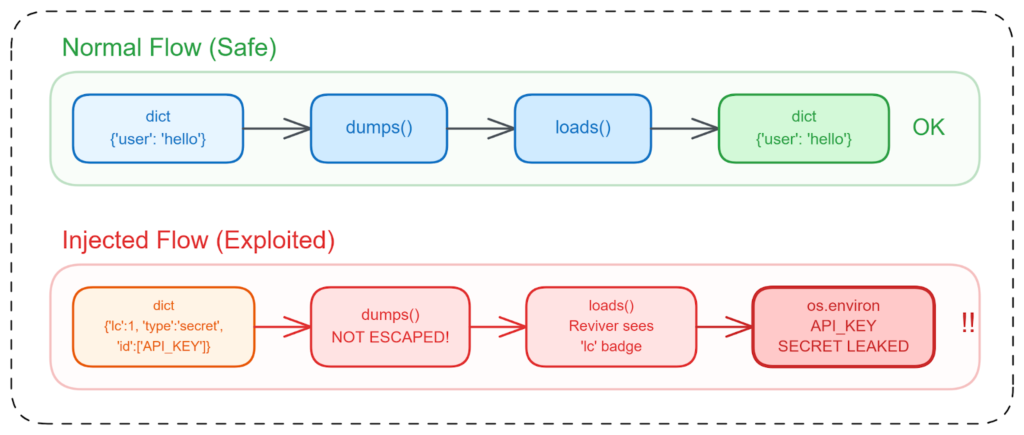

The second flaw, CVE-2025-68664, is the most severe of the three. GitHub says LangChain’s dumps() and dumpd() functions failed to escape free-form dictionaries containing the internal lc marker, which could cause untrusted input to be treated as a real LangChain object during deserialization. That can expose API keys, environment variables, and other secrets when apps deserialize attacker-controlled data.
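As a defense-in-depth measure alongside the upgrade, an application could refuse untrusted dictionaries that carry the reserved marker before they reach any serialization round trip. The sketch below assumes the marker is a dictionary key named `lc`, as the advisory describes; `contains_lc_marker` is a hypothetical helper, not a LangChain API, and it is deliberately strict, so it may reject harmless data that happens to use that key.

```python
from typing import Any

# Assumption: the reserved key name, taken from the advisory's description.
LC_MARKER = "lc"


def contains_lc_marker(value: Any) -> bool:
    """Recursively check untrusted data for the reserved marker key."""
    if isinstance(value, dict):
        if LC_MARKER in value:
            return True
        return any(contains_lc_marker(v) for v in value.values())
    if isinstance(value, (list, tuple)):
        return any(contains_lc_marker(v) for v in value)
    # Scalars (str, int, None, ...) cannot carry a key.
    return False


def reject_if_marked(untrusted: Any) -> Any:
    """Raise before marked data can be stored and later deserialized."""
    if contains_lc_marker(untrusted):
        raise ValueError("untrusted input contains a reserved 'lc' key")
    return untrusted
```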

The third issue, CVE-2025-67644, affects langgraph-checkpoint-sqlite. GitHub’s advisory says attackers who control metadata filter keys can manipulate SQL queries during checkpoint search operations and execute arbitrary SQL against the database. In real deployments, that can expose stored thread state, memory, and conversation history.
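The general pattern for avoiding this kind of injection is to keep caller-supplied keys out of the SQL text entirely. The sketch below is not the query that langgraph-checkpoint-sqlite uses; it assumes a hypothetical JSON column named `metadata` and SQLite's `json_extract` function, validates each key against a conservative pattern, and passes both the JSON path and the value as bound parameters.

```python
import re
from typing import Any

# Conservative allowlist pattern for metadata keys (an assumption, tune as needed).
_KEY_PATTERN = re.compile(r"[A-Za-z_][A-Za-z0-9_]*")


def build_metadata_filter(filters: dict[str, Any]) -> tuple[str, list[Any]]:
    """Build a WHERE fragment and parameter list for a hypothetical
    `metadata` JSON column, without interpolating keys into the SQL."""
    clauses: list[str] = []
    params: list[Any] = []
    for key, value in filters.items():
        if not _KEY_PATTERN.fullmatch(key):
            raise ValueError(f"unsafe metadata key: {key!r}")
        # Both the JSON path and the value travel as bound parameters.
        clauses.append("json_extract(metadata, ?) = ?")
        params.extend([f"$.{key}", value])
    where = " AND ".join(clauses) if clauses else "1=1"
    return where, params
```

The returned fragment would then be appended to a fixed query string and executed with `cursor.execute(sql, params)`, so a key such as `x') OR 1=1 --` is rejected before any SQL is built.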

Vulnerabilities at a glance

| CVE | Affected component | Main risk | Fixed version |
|---|---|---|---|
| CVE-2026-34070 | langchain-core legacy prompt loading | Arbitrary file read via path traversal | langchain-core 1.2.22+ |
| CVE-2025-68664 | langchain-core serialization | Environment secret leakage through unsafe deserialization | langchain-core 0.3.81 and 1.2.5+ |
| CVE-2025-67644 | langgraph-checkpoint-sqlite | SQL injection in checkpoint searches | langgraph-checkpoint-sqlite 3.0.1+ |

Why the blast radius could be large

The wider concern is scale. PyPIStats currently shows weekly downloads of roughly 53.3 million for langchain, while PyPI lists langchain-core and langgraph as heavily used maintained packages in the same ecosystem. Cyera argues that a flaw in LangChain does not stay contained to one project because hundreds of libraries wrap it, extend it, or depend on it.

That point has become more relevant as AI plumbing has turned into a prime target. These frameworks often run with direct access to prompts, retrieved documents, vector stores, API credentials, and agent memory. A vulnerability in middleware can therefore leak the most valuable data in the application, not just app metadata.

The timing also adds pressure. Another AI framework bug, Langflow’s CVE-2026-33017, went under active exploitation within about 20 hours of disclosure, and CISA added it to the Known Exploited Vulnerabilities catalog on March 25, 2026. That does not mean these LangChain flaws are already under active attack, but it shows how quickly threat actors move once AI framework bugs become public.

What developers should do now

The first step is straightforward. Update the affected packages immediately. For the path traversal bug, move to langchain-core 1.2.22 or later. For the deserialization flaw, use langchain-core 0.3.81 or 1.2.5 or later, depending on your branch. For the SQLite checkpoint issue, upgrade langgraph-checkpoint-sqlite to 3.0.1 or newer.
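A quick way to see where an environment stands is to compare installed versions against those thresholds. The sketch below uses only the standard library; the version numbers come from this article and should be confirmed against the advisories, and the helper names are hypothetical. Its simple parser ignores pre-release suffixes, so a release candidate is treated like the final release.

```python
import re
from importlib.metadata import PackageNotFoundError, version


def parse_release(v: str) -> tuple[int, ...]:
    """Parse the leading numeric parts of a version ('1.2.5rc1' -> (1, 2, 5))."""
    parts = []
    for piece in v.split("."):
        m = re.match(r"\d+", piece)
        if not m:
            break
        parts.append(int(m.group()))
    return tuple(parts)


def langchain_core_status(v: str) -> dict[str, bool]:
    """Map each langchain-core CVE to True if version v includes the fix."""
    t = parse_release(v)
    return {
        "CVE-2026-34070": t >= (1, 2, 22),
        # Fixed separately on the 0.x branch (0.3.81) and the 1.x branch (1.2.5).
        "CVE-2025-68664": t >= (1, 2, 5) or (t[:1] == (0,) and t >= (0, 3, 81)),
    }


def sqlite_checkpoint_patched(v: str) -> bool:
    """True if langgraph-checkpoint-sqlite version v includes the fix."""
    return parse_release(v) >= (3, 0, 1)


def report() -> None:
    """Print the patch status of whatever is installed in this environment."""
    for pkg in ("langchain-core", "langgraph-checkpoint-sqlite"):
        try:
            v = version(pkg)
        except PackageNotFoundError:
            print(f"{pkg}: not installed")
            continue
        if pkg == "langchain-core":
            print(pkg, v, langchain_core_status(v))
        else:
            print(pkg, v, {"CVE-2025-67644": sqlite_checkpoint_patched(v)})
```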

Teams should also treat all model output and all user-supplied data as untrusted input. That means avoiding legacy prompt loaders with user-controlled paths, restricting or removing unsafe deserialization flows, and validating metadata keys before they reach database-backed checkpoint lookups.

If LangChain sits inside another library, the job is harder. Developers need to audit transitive dependencies, not just direct imports, because affected code paths may exist inside wrappers, internal tools, and agent frameworks built on top of LangChain.
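One starting point for that audit is to list which installed distributions declare a dependency on the affected packages. The sketch below uses the standard library's `importlib.metadata`; `find_dependents` and `requirement_name` are hypothetical helpers, and the scan covers only direct dependents in the current environment, so vendored copies or deeper chains still need a manual look.

```python
import re
from importlib.metadata import distributions

TARGETS = {"langchain-core", "langgraph-checkpoint-sqlite"}


def normalize(name: str) -> str:
    """Normalize a project name the way package indexes compare them."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(req: str) -> str:
    """Extract the project name from a requirement string such as
    "langchain_core>=0.3,<2; extra == 'x'"."""
    m = re.match(r"\s*([A-Za-z0-9][A-Za-z0-9._-]*)", req)
    return normalize(m.group(1)) if m else ""


def find_dependents(targets: set[str] = TARGETS) -> dict[str, list[str]]:
    """Map each installed distribution to its requirements on a target package."""
    hits: dict[str, list[str]] = {}
    for dist in distributions():
        name = dist.metadata.get("Name", "<unknown>")
        for req in dist.requires or []:
            if requirement_name(req) in targets:
                hits.setdefault(name, []).append(req)
    return hits


if __name__ == "__main__":
    for package, reqs in sorted(find_dependents().items()):
        print(package, "->", reqs)
```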

Practical response checklist

- Upgrade langchain-core and langgraph-checkpoint-sqlite to patched versions.

- Search your code for load_prompt() and load_prompt_from_config() usage with user-influenced input.

- Review any flow that deserializes dictionaries or serialized LangChain objects from users, agents, or LLM output.

- Lock down metadata filter keys for LangGraph SQLite checkpoint queries.

- Audit downstream wrappers and transitive dependencies that may pull in vulnerable LangChain code paths.
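The code search in the checklist can be partly automated. The sketch below walks a Python source string with the standard `ast` module and reports calls whose function name matches one of the legacy loaders; `find_loader_calls` is a hypothetical helper, and it matches by name only, so it cannot tell whether the argument is actually user-influenced or whether the function was imported under an alias.

```python
import ast

LEGACY_LOADERS = {"load_prompt", "load_prompt_from_config"}


def find_loader_calls(source: str, filename: str = "<string>") -> list[tuple[int, str]]:
    """Return (line number, function name) for each legacy loader call."""
    hits = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name):  # load_prompt(...)
            name = func.id
        elif isinstance(func, ast.Attribute):  # module.load_prompt(...)
            name = func.attr
        else:
            continue
        if name in LEGACY_LOADERS:
            hits.append((node.lineno, name))
    return sorted(hits)
```

To scan a project, the function could be applied to the text of each `.py` file, for example via `pathlib.Path(root).rglob("*.py")`, with each hit then reviewed by hand.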

FAQ

Which of the three flaws is the most severe?

CVE-2025-68664 stands out because it can leak environment secrets through unsafe deserialization and carries a CVSS score of 9.3 in public advisories.

Can these flaws expose sensitive data?

Yes. The disclosed risks include filesystem reads, environment secret leakage, and access to stored conversation history in checkpoint databases.

Are patches available?

Yes. Maintainers and advisories point to langchain-core 1.2.22+ for CVE-2026-34070, langchain-core 0.3.81 or 1.2.5+ for CVE-2025-68664, and langgraph-checkpoint-sqlite 3.0.1+ for CVE-2025-67644.

Are the flaws being actively exploited?

As of March 31, 2026, no primary source confirms active exploitation of these three LangChain and LangGraph flaws. The separate Langflow flaw CVE-2026-33017 was exploited rapidly after disclosure, which shows the broader risk around AI framework vulnerabilities.
