Microsoft says hackers now use AI across every stage of the attack chain

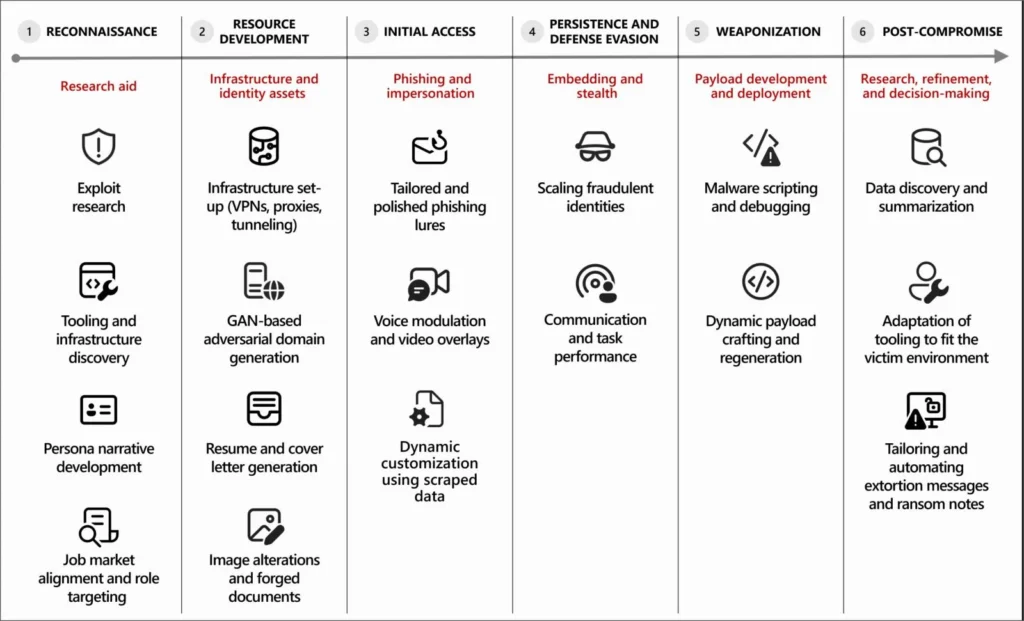

Microsoft says threat actors now use artificial intelligence throughout the cyberattack lifecycle, from early reconnaissance and phishing to malware development, post-compromise research, and data monetization. In a new threat intelligence report, the company says most malicious AI use today involves language models that generate text, code, or media to help attackers move faster and reduce technical friction.

The company stops short of saying AI is fully running attacks on its own. Microsoft says human operators still control targeting, objectives, and deployment decisions. But it also says AI already acts as a force multiplier that helps attackers scale phishing, debug malware, summarize stolen data, research vulnerabilities, and build or refine infrastructure.

That matters because the report describes AI as an operational layer inside real campaigns rather than a side tool. Microsoft says it has seen this most clearly in North Korean activity, especially operations tied to Jasper Sleet and Coral Sleet, where AI helps create fake identities, tailor resumes to job postings, generate convincing communications, and support long-term abuse of legitimate access.

What Microsoft says attackers are doing with AI

Microsoft says the most common malicious use of AI today centers on language models for producing text, code, or media. In practice, that includes phishing lure generation, content translation, stolen-data summarization, malware scripting and debugging, and infrastructure scaffolding. The report says these uses reduce technical barriers and speed up execution, even when the attacker does not rely on a fully autonomous system.

The report breaks that activity across the full attack chain. During reconnaissance, attackers use AI to research public vulnerabilities, identify possible exploitation paths, and study tools or infrastructure that may help with evasion and scaling. During initial access, Microsoft says AI improves phishing and impersonation by helping attackers adapt tone, language, and persona details more quickly.

Microsoft also says AI now appears after compromise, not just before it. The company says attackers use it to analyze unfamiliar victim environments, summarize logs or directory structures, prioritize lateral movement paths, research persistence options, refine escalation attempts, and sort stolen data for extortion or resale.

North Korean campaigns remain a key example

Microsoft highlights North Korean remote IT worker schemes as one of the clearest real-world examples of operational AI misuse. The company says Jasper Sleet has used generative AI to develop fraudulent digital personas, including prompts to generate culturally appropriate names and matching email address formats. Microsoft also says these actors use AI to review software and IT job postings, extract required skills, and tailor fake identities to fit those roles.

Coral Sleet appears elsewhere in the report as an example of AI-assisted coding and payload work. Microsoft says it observed code with traits consistent with AI-assisted creation, including emoji markers in execution paths and conversational inline comments. The report also says the actor created new payloads by jailbreaking LLMs, bypassing their built-in safeguards to get them to produce malicious code.
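To make those code traits concrete, here is a minimal sketch of how an analyst might count them in a recovered script. It is an illustration only, not Microsoft's detection logic: the function name `ai_style_traits`, the emoji ranges, and the phrase list are all assumptions, and such traits are weak hints rather than proof of AI-assisted authorship.

```python
import re

# Assumption: a rough set of emoji code-point ranges; real coverage is broader.
EMOJI_RE = re.compile("[\U0001F300-\U0001FAFF\u2600-\u27BF]")

# Assumption: illustrative conversational phrases, not taken from the report.
CONVERSATIONAL_RE = re.compile(
    r"\b(let's|here we|now we|feel free|as requested|you can)\b",
    re.IGNORECASE,
)


def ai_style_traits(source: str) -> dict:
    """Count lines with emoji and comments that read like chat replies."""
    emoji_lines = 0
    chatty_comments = 0
    for line in source.splitlines():
        if EMOJI_RE.search(line):
            emoji_lines += 1
        # Naive comment detection: text after '#' or '//'.
        # This ignores string literals that happen to contain those markers.
        match = re.search(r"(#|//)(.*)$", line)
        if match and CONVERSATIONAL_RE.search(match.group(2)):
            chatty_comments += 1
    return {"emoji_lines": emoji_lines, "chatty_comments": chatty_comments}


sample = 'print("\u2705 done")\n# Now we connect to the server\nx = 1\n'
traits = ai_style_traits(sample)
```

Plenty of human-written code uses emoji or friendly comments, so counts like these are best treated as one triage signal among several.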

Microsoft sees early signs of agentic AI abuse

Microsoft says most current malicious use still revolves around generative AI, not autonomous agents. Even so, the company says it is beginning to see early experimentation with agentic AI, where systems help with iterative decision-making and task execution over time. Microsoft says it has not yet seen large-scale threat actor use of agentic AI because of reliability and operational limits, but it treats that trend as a warning sign for future tradecraft.

The same report also points to experimental AI-enabled malware. Microsoft says public reporting has documented malware families that can dynamically generate scripts, obfuscate code, or adapt behavior at runtime using language models. The company says these efforts remain limited and uneven today, but they may point to future malware that changes functionality on demand and reduces the static indicators defenders often rely on.

What this means for defenders

Microsoft’s guidance is practical. The company says defenders should not focus only on strange wording or obviously broken phishing messages, because AI lets attackers produce cleaner, more localized, and more believable content. Instead, Microsoft says detection should focus more on behavior, delivery infrastructure, context, and abnormal credential use.
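As a rough illustration of that shift from wording to behavior, the sketch below scores a sign-in by context instead of by message content. Everything in it is hypothetical: the `SignInEvent` fields, the `credential_risk` function, and the weights are assumptions for illustration, not part of Microsoft's guidance or any Microsoft product API.

```python
from dataclasses import dataclass


@dataclass
class SignInEvent:
    # Hypothetical fields; real sign-in logs carry far more context.
    user: str
    country: str
    device_id: str
    mfa_passed: bool


def credential_risk(event, known_countries, known_devices) -> int:
    """Return a simple additive risk score for one sign-in.

    The weights are arbitrary assumptions chosen for illustration.
    """
    score = 0
    if event.country not in known_countries.get(event.user, set()):
        score += 2  # sign-in from a country not seen for this user
    if event.device_id not in known_devices.get(event.user, set()):
        score += 1  # unfamiliar device
    if not event.mfa_passed:
        score += 2  # no successful multifactor check
    return score


known_countries = {"alice": {"US"}}
known_devices = {"alice": {"laptop-1"}}

usual = SignInEvent("alice", "US", "laptop-1", True)
unusual = SignInEvent("alice", "BR", "unknown-9", False)

usual_score = credential_risk(usual, known_countries, known_devices)
unusual_score = credential_risk(unusual, known_countries, known_devices)
```

The point of the sketch is that none of the inputs depend on how polished a phishing message looks, which is why this kind of signal holds up when attackers use AI to clean up their text.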

The company also says organizations should treat remote IT worker abuse and similar schemes as insider-risk problems because they often depend on legitimate access rather than obvious malware. Microsoft recommends stronger identity vetting, tighter access controls, broader auditing, phishing-resistant account protections, and better visibility into AI-related assets and risks across Microsoft Defender, Entra, and Purview.

AI misuse across the attack chain

| Attack stage | What Microsoft says AI helps with |
|---|---|
| Reconnaissance | Vulnerability research, exploit-path analysis, tool and infrastructure research, persona building |
| Initial access | Tailored phishing lures, impersonation, translated content, social engineering support |
| Malware and tooling | Code generation, debugging, payload refinement, infrastructure setup |
| Discovery and lateral movement | Environment analysis, summarizing logs and configs, movement-path prioritization |
| Persistence and privilege escalation | Researching persistence options, adapting scripts, interpreting failed escalation attempts |
| Collection and exfiltration | Finding sensitive data, summarizing files, prioritizing valuable data, refining exfiltration plans |
| Impact and monetization | Summarizing stolen data, shaping extortion strategies, drafting ransom or pressure messages |

Key takeaways

- Microsoft says threat actors now operationalize AI across the cyberattack lifecycle.

- The company says most malicious AI use today involves language models for text, code, or media generation.

- Human operators still make the key decisions, but AI speeds up execution and lowers barriers.

- Jasper Sleet and Coral Sleet appear in the report as real-world examples of AI-enabled tradecraft.

- Microsoft says agentic AI abuse remains early and not yet widespread, but it could become more important later.

FAQ

Is AI running cyberattacks on its own?

No. Microsoft says human operators still retain control over targeting, objectives, and deployment decisions. The company describes AI mainly as a force multiplier that speeds up existing tradecraft.

What are attackers using AI for?

Microsoft says attackers use AI for phishing, malware generation and debugging, vulnerability research, infrastructure setup, post-compromise analysis, and stolen-data triage.

Which threat groups did Microsoft highlight?

Microsoft highlighted North Korean activity, especially Jasper Sleet and Coral Sleet, as examples of groups using AI in real operations.

Are threat actors already using agentic AI at scale?

Microsoft says no. The company says it sees early experimentation, but not large-scale operational use yet because of reliability and operational constraints.
