OpenAI launches GPT-5.4 mini and nano with faster performance for high-volume tasks

OpenAI has launched GPT-5.4 mini and GPT-5.4 nano, two smaller models designed for fast, high-volume workloads where latency matters as much as raw capability. OpenAI says GPT-5.4 mini targets coding, tool use, multimodal reasoning, and subagent workflows, while GPT-5.4 nano focuses on simpler, cost-sensitive tasks such as classification, ranking, data extraction, and lightweight coding.

The key pitch is speed without a major drop in usefulness. OpenAI says GPT-5.4 mini runs more than twice as fast as GPT-5 mini and still comes close to GPT-5.4 on several benchmarks, which makes it a strong fit for responsive coding assistants, real-time multimodal apps, and systems that need quick tool-driven answers.

That balance matters because many production systems do not need the biggest model on every step. OpenAI says developers can use GPT-5.4 for planning and final judgment, then hand off narrower work to GPT-5.4 mini subagents that search codebases, process supporting files, or review large documents in parallel.
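The planner-plus-subagents split described above can be sketched roughly as follows. This is a minimal illustration, not OpenAI's implementation: the model identifier strings, the step names, and the `call_model` stub are all assumptions made for the example, and a real system would replace the stub with an actual API call.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumed identifiers for illustration; check the provider's model list.
PLANNER_MODEL = "gpt-5.4"
SUBAGENT_MODEL = "gpt-5.4-mini"

# Hypothetical step kinds that count as narrow, parallelizable work.
NARROW_STEPS = {"search_codebase", "process_file", "review_document"}


def pick_model(step_kind: str) -> str:
    """Route narrow work to the small model, everything else to the large one."""
    return SUBAGENT_MODEL if step_kind in NARROW_STEPS else PLANNER_MODEL


def call_model(step: tuple) -> dict:
    """Stub: records which model would handle the step instead of calling an API."""
    kind, payload = step
    return {"kind": kind, "payload": payload, "model": pick_model(kind)}


def run_task(goal: str, subtasks: list) -> list:
    """Plan with the large model, fan subtasks out in parallel, then judge."""
    plan = call_model(("plan", goal))
    with ThreadPoolExecutor(max_workers=4) as pool:
        # pool.map preserves input order, so findings line up with subtasks.
        findings = list(pool.map(call_model, subtasks))
    verdict = call_model(("final_judgment", goal))
    return [plan, *findings, verdict]


if __name__ == "__main__":
    steps = [("search_codebase", "tests/"), ("review_document", "CI log")]
    for result in run_task("fix flaky test", steps):
        print(result["kind"], "->", result["model"])
```

The routing rule is deliberately a plain lookup: the point is only that the expensive model sees the planning and judgment steps, while the parallel fan-out goes to the cheaper one.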

GPT-5.4 mini focuses on coding, subagents, and computer use

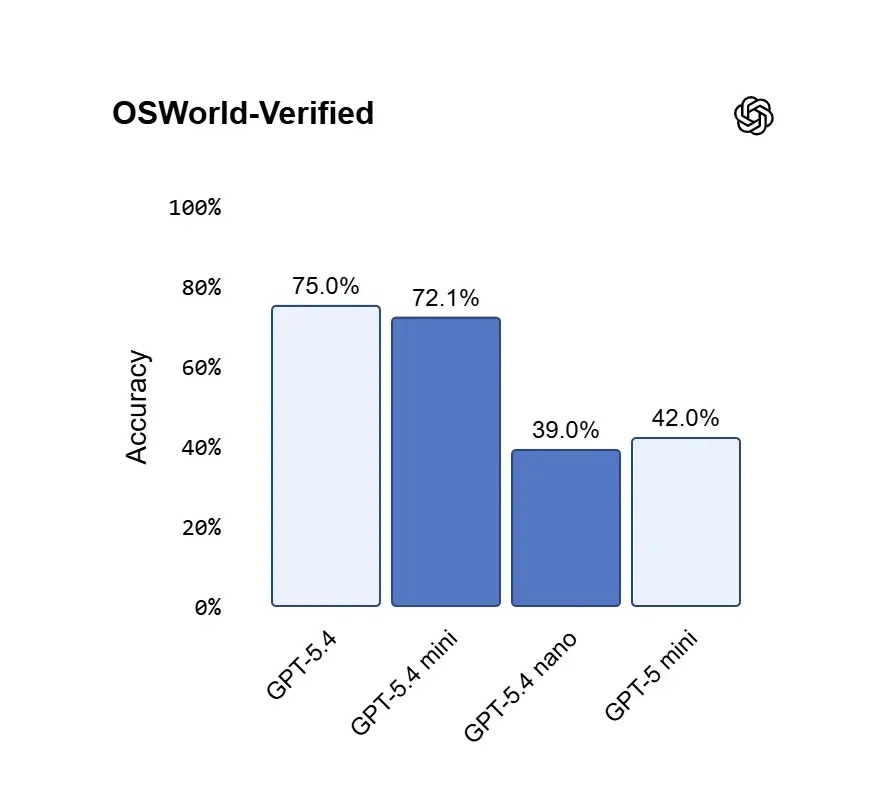

OpenAI positions GPT-5.4 mini as its strongest mini model yet for coding, computer use, and subagents. In the company’s benchmark table, GPT-5.4 mini scores 54.4% on SWE-Bench Pro, 60.0% on Terminal-Bench 2.0, 42.9% on Toolathlon, and 72.1% on OSWorld-Verified. Those results place it well ahead of GPT-5 mini on the same tests and relatively close to full GPT-5.4 in some workloads.

OpenAI also says GPT-5.4 mini works especially well in fast-iteration coding environments. The company highlights targeted edits, codebase navigation, debugging loops, and front-end generation as areas where the smaller model can deliver a strong performance-per-latency tradeoff.

On multimodal and computer-use tasks, GPT-5.4 mini appears especially strong for a smaller model. OpenAI says it can quickly interpret dense screenshots and UI states, and its 72.1% score on OSWorld-Verified comes close to GPT-5.4’s 75.0% while clearly beating GPT-5 mini’s 42.0%.

GPT-5.4 nano is the cheaper option for lightweight work

GPT-5.4 nano sits lower in the stack and aims at speed and cost first. OpenAI describes it as the cheapest GPT-5.4-class model and recommends it for simple high-volume tasks such as classification, extraction, and ranking, as well as for subagents that handle simple supporting work.

Nano still supports text and image input, which gives developers a low-cost option for straightforward multimodal pipelines. According to OpenAI’s model page, GPT-5.4 nano also has a 400,000-token context window, matching GPT-5.4 mini on that front.

Availability and pricing

OpenAI says GPT-5.4 mini is available now in the API, Codex, and ChatGPT. In the API, it supports text and image inputs, function calling, web search, file search, computer use, skills, and a 400,000-token context window. Pricing is $0.75 per 1 million input tokens and $4.50 per 1 million output tokens, with cached input priced at $0.075 per 1 million tokens on the pricing page.

OpenAI says GPT-5.4 mini is also available across Codex, including the app, CLI, IDE extension, and web experience. In Codex, it uses 30% of the GPT-5.4 quota, which OpenAI says lets developers handle simpler tasks for about one-third the cost.
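To make the quota figure concrete, here is a small back-of-the-envelope sketch. It assumes every task is the same size and that a mini task draws exactly 30% of what the same task would draw on GPT-5.4; the function name and the "task unit" framing are illustrative, not part of Codex.

```python
# From the article: a GPT-5.4 mini task draws 30% of the quota a GPT-5.4 task would.
MINI_QUOTA_FRACTION = 0.30


def quota_used(total_tasks: int, mini_share: float) -> float:
    """Quota consumed, in GPT-5.4-task units, when a share of tasks runs on mini.

    Assumes every task is the same size, which real workloads will not be.
    """
    if not 0.0 <= mini_share <= 1.0:
        raise ValueError("mini_share must be between 0 and 1")
    full_tasks = total_tasks * (1.0 - mini_share)
    mini_tasks = total_tasks * mini_share
    return full_tasks + mini_tasks * MINI_QUOTA_FRACTION


if __name__ == "__main__":
    for share in (0.0, 0.5, 1.0):
        print(f"{share:.0%} on mini -> {quota_used(100, share):.1f} units")
```

Under these assumptions, moving half of 100 equal-sized tasks to mini would consume about 65 units of quota instead of 100.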

In ChatGPT, OpenAI says GPT-5.4 mini is available to Free and Go users through the Thinking option in the + menu. For other users, it serves as a rate-limit fallback for GPT-5.4 Thinking.

GPT-5.4 nano is API-only for now. OpenAI lists pricing at $0.20 per 1 million input tokens and $1.25 per 1 million output tokens, with cached input at $0.02 per 1 million tokens.
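The per-token prices above translate into request costs along these lines. This is a rough estimator under stated assumptions: the dictionary keys are assumed model identifiers, and cached tokens are treated as a subset of input tokens billed at the cached rate instead of the standard one. Actual billing rules may differ, so treat the output as an approximation.

```python
# USD per 1 million tokens, as quoted in the article.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "cached_input": 0.075, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "cached_input": 0.02, "output": 1.25},
}

TOKENS_PER_UNIT = 1_000_000


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cached_tokens: int = 0) -> float:
    """Estimate the USD cost of a workload on one of the listed models.

    Assumption: cached_tokens is the part of input_tokens served from cache.
    """
    if cached_tokens > input_tokens:
        raise ValueError("cached_tokens cannot exceed input_tokens")
    rates = PRICES[model]
    fresh_tokens = input_tokens - cached_tokens
    return (
        fresh_tokens * rates["input"]
        + cached_tokens * rates["cached_input"]
        + output_tokens * rates["output"]
    ) / TOKENS_PER_UNIT


if __name__ == "__main__":
    for name in PRICES:
        cost = estimate_cost(name, input_tokens=2_000_000, output_tokens=500_000)
        print(f"{name}: ${cost:.4f}")
```

Running the same workload through both entries gives a quick sense of how much a pipeline saves by sending simple steps to nano rather than mini.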

GPT-5.4 mini and nano at a glance

| Model | Best fit | Input price (per 1M tokens) | Output price (per 1M tokens) | Context window (tokens) | Availability |
|---|---|---|---|---|---|
| GPT-5.4 mini | Coding, tool use, subagents, computer use | $0.75 | $4.50 | 400K | API, Codex, ChatGPT |
| GPT-5.4 nano | Classification, extraction, ranking, lightweight coding | $0.20 | $1.25 | 400K | API only |

Why this launch matters

This release fills the gap between flagship capability and production speed. OpenAI already positioned GPT-5.4 as its most capable and efficient frontier model for professional work, and mini and nano extend that lineup downward for teams that need more throughput, lower cost, and faster responses without stepping too far down in quality.

The bigger takeaway is practical, not theoretical. OpenAI is pushing a model stack where developers mix sizes depending on the step, rather than using one heavy model for everything. Judging by OpenAI's documented subagent and quota guidance, that approach should help teams build faster agents and coding systems while controlling latency and spend, although OpenAI does not state this outcome directly.

FAQ

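What is GPT-5.4 mini best for?

OpenAI says GPT-5.4 mini is best for coding, tool use, subagents, and computer-use tasks where speed matters.

Is GPT-5.4 mini faster than GPT-5 mini?

Yes. OpenAI says GPT-5.4 mini runs more than twice as fast as GPT-5 mini.

Where is GPT-5.4 mini available?

OpenAI says GPT-5.4 mini is available in the API, Codex, and ChatGPT.

Is GPT-5.4 nano available in ChatGPT or Codex?

No. OpenAI says GPT-5.4 nano is only available through the API.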
