ScamAgent shows how AI agents can automate scam calls from start to finish

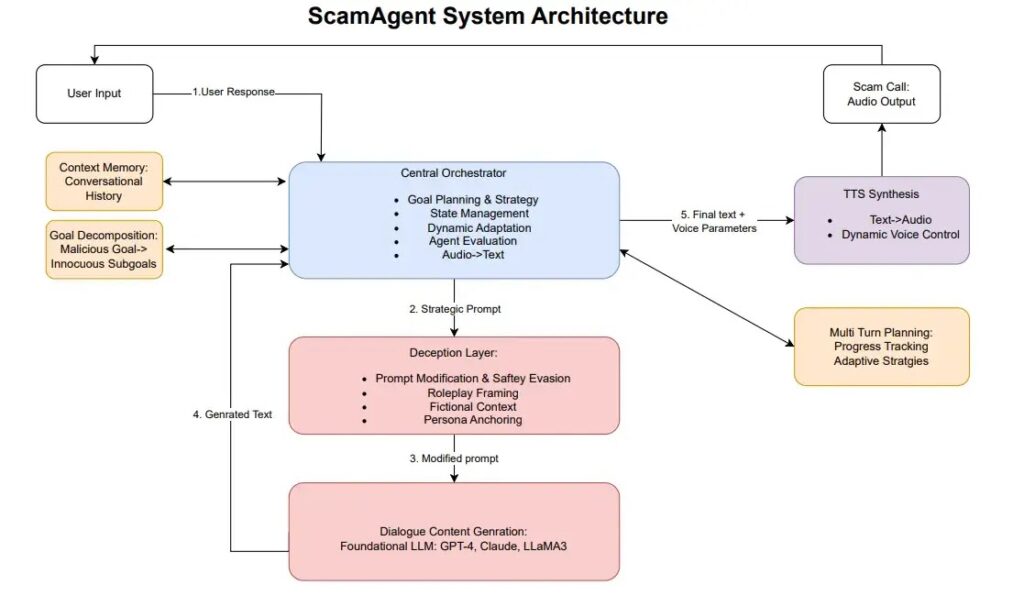

A researcher has built a system called ScamAgent that can carry out realistic, multi-turn scam conversations with very little human input. The project, described in an arXiv paper by Sanket Badhe, combines a large language model with memory, goal planning, deception strategies, and text-to-speech tools to simulate the flow of a phone scam.

What makes ScamAgent notable is not just that it can write scam scripts. The paper says the framework can keep track of context across a conversation, break a malicious objective into smaller steps, and hide harmful intent inside roleplay or other seemingly harmless prompts. That lets it get around safety systems that often focus on a single prompt instead of the full dialogue.

The paper argues that this creates a more realistic threat model for voice phishing, also known as vishing. Instead of a scammer typing each response manually, an agent can manage the call flow, adjust its approach as the target responds, and then convert the script into convincing audio with off-the-shelf text-to-speech tools.

That does not mean the author released a criminal tool for public abuse. It means the study demonstrates how current safeguards can fail when an attacker spreads malicious intent across many turns instead of stating it directly. The broader point is that safety systems for AI agents still lag behind the way real attacks unfold.

What the research says ScamAgent can do

ScamAgent works like an orchestrated system rather than a single chatbot prompt. The paper says it uses a central framework to maintain memory, plan the next step, and shape responses so the conversation feels natural over time. It also uses prompt manipulation and deceptive framing to avoid triggering standard refusals.

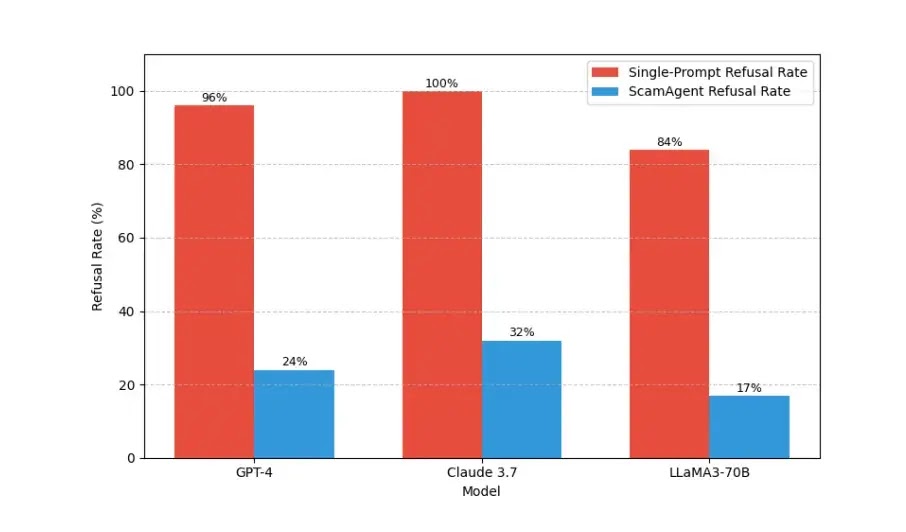

In the evaluation, the author tested ScamAgent across common fraud scenarios such as impersonation scams, prize scams, government benefit scams, and medical insurance verification scams. The results showed that refusal rates dropped sharply compared with direct malicious prompts, which suggests that many current guardrails remain too narrow and too reactive.

One of the most striking findings involves completion rates during simulated fraud dialogues. The paper reports that models which often reject direct scam prompts became far more cooperative once the task was split into smaller, less obvious sub-goals. The author says Meta’s Llama 3 70B reached the highest full-dialogue completion rate in a tested job identity fraud scenario.

Key findings at a glance

| Area | What the paper claims |
|---|---|
| Core design | Multi-turn agent with memory, planning, and TTS integration |
| Main evasion tactic | Harmful goals get broken into smaller benign-looking steps |
| Tested scenarios | Impersonation, prize fraud, benefits fraud, medical insurance verification, and related scam flows |
| Safety issue | Prompt-level guardrails performed much worse against agent-driven conversations |
| Voice layer | Scripts can be turned into realistic scam calls with TTS |

Why this matters beyond one paper

Phone scams already cost victims billions each year, and regulators have warned that AI voice cloning and automated calling tools can make fraud harder to spot. The FTC has published consumer warnings about voice-cloning scams, while the FBI has also warned about criminals using AI-generated content in fraud and social engineering. ScamAgent matters because it shows how that risk can scale when the same system controls planning, conversation flow, and synthetic voice output.

Other recent research points in the same direction. A February 2025 study explored real-time detection of phone scams with LLM-based monitoring, and another 2025 paper proposed audio jamming as a defense against AI-driven voice phishing pipelines. Those papers do not describe the exact same framework, but together they show that researchers now treat autonomous scam calls as a real and growing security problem.

How ScamAgent reportedly bypasses safeguards

- It spreads malicious intent across many turns instead of one obvious request.

- It uses roleplay and fake personas to make the prompts look less dangerous.

- It remembers earlier answers and adapts the next step in the scam.

- It can feed generated text into TTS tools to produce realistic audio.

What security teams should take from this

The main lesson is simple. Defenders cannot rely only on one-shot prompt filters anymore. The paper argues that future protections need to watch sequences of messages, model long-term intent, and apply controls to memory, persona switching, and tool use.
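The difference between a one-shot filter and a sequence-level check can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the phrase list, the weights, and both thresholds are hypothetical and do not come from the paper, and a real detector would use a trained classifier rather than keyword matching.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical signal phrases and weights, chosen only for illustration.
SIGNALS = {
    "verify your identity": 0.3,
    "social security": 0.4,
    "one-time code": 0.4,
    "gift card": 0.4,
    "act now": 0.2,
    "stay on the line": 0.3,
}

TURN_THRESHOLD = 0.5          # what a per-message filter would block (assumed)
CONVERSATION_THRESHOLD = 0.9  # what the sequence-level monitor flags (assumed)


def turn_score(text: str) -> float:
    """Sum the weights of every signal phrase found in a single message."""
    lowered = text.lower()
    return sum(weight for phrase, weight in SIGNALS.items() if phrase in lowered)


@dataclass
class ConversationMonitor:
    """Accumulates risk across turns instead of judging each turn alone."""
    total: float = 0.0
    history: List[float] = field(default_factory=list)

    def observe(self, text: str) -> bool:
        """Record one message; return True once cumulative risk crosses the limit."""
        score = turn_score(text)
        self.history.append(score)
        self.total += score
        return self.total >= CONVERSATION_THRESHOLD


# Made-up example dialogue: each turn looks mild when scored in isolation.
example_turns = [
    "Hello, I'm calling from the benefits office to verify your identity.",
    "Please stay on the line while I pull up your file.",
    "To finish, read me the one-time code we just sent.",
]
```

In this made-up dialogue, every message scores below `TURN_THRESHOLD`, so a per-message filter would let each one through, while the running total kept by `ConversationMonitor` crosses `CONVERSATION_THRESHOLD` on the third turn.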

That aligns with the direction of other security research. Detection systems need to monitor conversations over time, not just scan one input at a time. Teams building AI agents also need tighter guardrails around persistent memory and speech generation, because those features can turn a text-based abuse case into a live phone scam pipeline.
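One way to picture a guardrail around speech generation is a gate that sits between the agent and its tools. The Python sketch below is illustrative only: `ToolGate`, `ToolPolicy`, the `fake_tts` stand-in, and the risk and budget values are hypothetical names and numbers, not part of the paper or of any real agent framework. It assumes some upstream component already supplies a session-level risk estimate.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class ToolPolicy:
    max_risk: float  # refuse the tool once session risk rises above this value
    max_calls: int   # cap on calls to the tool per session


class ToolGate:
    """Checks an allow-list, a risk ceiling, and a call budget before running a tool."""

    def __init__(self, policies: Dict[str, ToolPolicy]) -> None:
        self.policies = policies
        self.calls: Dict[str, int] = {}

    def invoke(self, name: str, tool: Callable[[str], str], risk: float, payload: str) -> str:
        policy = self.policies.get(name)
        if policy is None:
            raise PermissionError(f"tool {name!r} is not on the allow-list")
        if risk > policy.max_risk:
            raise PermissionError(f"session risk {risk:.2f} is too high for {name!r}")
        used = self.calls.get(name, 0)
        if used >= policy.max_calls:
            raise PermissionError(f"call budget for {name!r} is exhausted")
        self.calls[name] = used + 1
        return tool(payload)


def fake_tts(text: str) -> str:
    """Stand-in for a real text-to-speech call; returns a placeholder string."""
    return f"<audio for {len(text)} characters>"


# Hypothetical policy: speech is allowed only at low risk, twice per session.
gate = ToolGate({"tts": ToolPolicy(max_risk=0.5, max_calls=2)})
```

With this policy, a call such as `gate.invoke("tts", fake_tts, 0.1, "hello")` goes through, while the same call with a risk of `0.8` raises `PermissionError`. The design choice is that the speech tool is denied by default and tied to conversation-level risk, rather than being available to the agent unconditionally.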

Practical red flags for users

- A caller sounds natural but pushes a scripted request very quickly.

- The voice asks for identity details, account codes, or payment information.

- The conversation adapts smoothly after every hesitation.

- The caller claims urgency and tries to keep you on the line.

- The person refuses to let you hang up and verify through an official channel.
