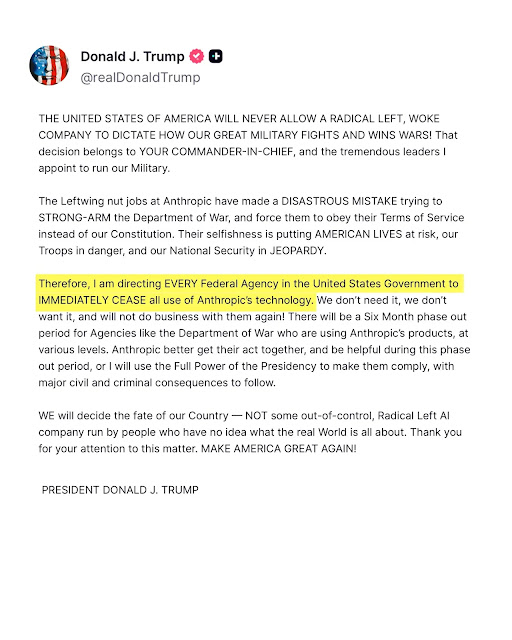

Trump Bans Anthropic AI in Federal Agencies; Pentagon Brands Claude a Supply‑Chain Risk

The U.S. government has ordered all federal agencies to stop using AI provider Anthropic and its Claude model, declaring the company a supply‑chain risk to national security. President Donald Trump issued the directive on February 28, 2026, requiring an immediate halt to government use of Anthropic technology, with a six‑month phase‑out period for the Department of War and other heavily integrated agencies.

Shortly after, Defense Secretary Pete Hegseth announced that Anthropic is now formally designated a “Supply‑Chain Risk to National Security,” barring any contractor, supplier, or partner that does business with the U.S. military from engaging in commercial activity with the company. The Pentagon says this classification is normally reserved for entities linked to foreign adversaries, such as Huawei.

What the ban covers

The actions by Trump and the Defense Department specifically target federal use and defense‑related supply chains. Together, they instruct:

- All federal agencies to immediately cease using Anthropic’s AI tools, including Claude.

- The Department of War to wind down existing deployments over six months.

- DoD‑linked contractors and service providers to stop working with Anthropic or lose access to defense business.

Anthropic notes that the supply‑chain designation was made under 10 U.S.C. § 3252, which limits its scope to Department of War contracts and does not, on its face, prohibit commercial use by civilian customers, API consumers, or non‑DoD organizations. The company has stated that it will challenge the designation in court if necessary.

Why the conflict erupted

The standoff centers on two specific use‑case restrictions that Anthropic demanded in its military contracts:

- A ban on the use of Claude for mass domestic surveillance of U.S. citizens.

- A ban on integrating Claude into fully autonomous weapons systems without human oversight.

The Pentagon sought broader access to Claude for “all lawful purposes,” including these categories. After months of private negotiations, the parties failed to reach an agreement. The Defense Department reportedly issued an ultimatum on February 27, telling Anthropic it must accept the new terms by 5:01 PM ET or face being blacklisted as a supply‑chain risk.

Anthropic argued that current frontier AI models are not reliable enough for autonomous weapons and that mass surveillance would violate U.S. civil‑rights protections. In a public statement, CEO Dario Amodei said the company “cannot in good conscience accede” to the Pentagon’s demands.

Legal and commercial implications

The supply‑chain designation has two main consequences:

- Federal contracts: Agencies and contractors tied to the Department of War cannot use or supply Anthropic technology.

- Cloud‑provider risk: Anthropic relies on infrastructure from Amazon, Microsoft, and Google, all of which hold major defense contracts. A strict reading of the Pentagon’s language could, in theory, threaten those cloud relationships.

Legal experts have warned that stretching this tool beyond its original intent, which was aimed at foreign‑linked entities, creates a dangerous precedent. Some analysts call it a political move that could deter other U.S. AI firms from insisting on safety limits in defense contracts.

Trump has warned that Anthropic could face “major civil and criminal consequences” if it does not cooperate during the phase‑out period. Anthropic has replied that it remains committed to lawful national‑security applications and will support a smooth transition for existing U.S. military operations while maintaining its red lines on autonomous weapons and mass surveillance.

Impact on the AI industry

Anthropic’s Claude had become one of the first frontier AI models deployed on classified U.S. government networks, under a roughly $200 million DoW contract awarded in 2024. The ban removes that presence and signals to other AI vendors that resistance to unrestricted military use may carry severe consequences.

At the same time, analysts say the move could benefit competitors such as OpenAI, Google (Gemini), and Elon Musk’s xAI (Grok), all of which the Pentagon is already evaluating or integrating for defense‑related AI work.

Key points in brief

| Aspect | What happened |
|---|---|
| Presidential order | Trump directs all federal agencies to immediately stop using Anthropic tech. |
| Phase‑out period | Six months for the Department of War and other embedded users. |
| Pentagon designation | Anthropic labeled a “Supply‑Chain Risk to National Security.” |
| Core dispute | Mass surveillance and autonomous‑weapons safeguards. |
| Customer impact | Civilian API users and non‑DoD customers are not directly covered by the ban. |
| Legal challenge expected | Anthropic says it will contest the supply‑chain ruling in court. |

What organizations should do now

For organizations and developers currently using Claude in U.S. government or defense‑linked systems:

- Audit usage: Map all workflows that rely on Anthropic models in federal or DoD‑adjacent environments.

- Plan migration: Identify alternative AI platforms aligned with the new policy and budget for model retraining.

- Review contracts: Ensure any cloud or software agreements do not violate the Pentagon’s supply‑chain restriction.

- Monitor legal updates: Track court filings and any new DoD guidance about AI‑vendor eligibility.
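As a starting point for the audit step above, a team could scan its repositories for references to Anthropic's SDKs and API. The following is a minimal sketch, not a compliance tool: the pattern list, the file‑suffix list, and the helper names `scan_text` and `audit_tree` are illustrative assumptions, and a real audit would also need to cover cloud consoles, procurement records, and third‑party tools that embed Claude.

```python
import re
from pathlib import Path

# Illustrative patterns only (an assumption, not an official or exhaustive list).
PATTERNS = {
    "python_sdk": re.compile(r"^\s*(?:import|from)\s+anthropic\b", re.MULTILINE),
    "npm_sdk": re.compile(r"@anthropic-ai/sdk"),
    "api_host": re.compile(r"api\.anthropic\.com"),
    "model_id": re.compile(r"\bclaude-[\w.\-]+"),
}

# File types to inspect; adjust to match your own codebase (assumption).
TEXT_SUFFIXES = {".py", ".js", ".ts", ".json", ".yaml", ".yml", ".toml", ".txt"}


def scan_text(text):
    """Return the sorted names of the patterns that match the given text."""
    return sorted(name for name, rx in PATTERNS.items() if rx.search(text))


def audit_tree(root):
    """Map each matching file under root to the pattern names found in it."""
    findings = {}
    for path in Path(root).rglob("*"):
        if not path.is_file() or path.suffix not in TEXT_SUFFIXES:
            continue
        try:
            text = path.read_text(encoding="utf-8", errors="ignore")
        except OSError:
            continue  # unreadable file: skip rather than abort the audit
        hits = scan_text(text)
        if hits:
            findings[str(path)] = hits
    return findings
```

Calling `audit_tree(".")` from a repository root returns a dictionary of file paths and the kinds of reference found in each, which can feed an inventory of affected workflows. Text matching of this kind will miss indirect usage, such as Claude accessed through a cloud provider's own model service, so the results are a lower bound.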

More broadly, the episode highlights the growing tension between AI safety, civil liberties, and the military’s appetite for powerful, largely uncontrolled AI. It also sets a precedent for how the U.S. government can use contracting and security tools to shape the direction of domestic AI development.

FAQ

What did President Trump order?

President Trump ordered all federal agencies to immediately stop using Anthropic’s AI technology, including the Claude models, with a six‑month grace period for the Department of War.

Why did the Pentagon designate Anthropic a supply‑chain risk?

The Pentagon designated Anthropic a supply‑chain risk after the company refused to allow the Pentagon to use Claude for mass domestic surveillance and fully autonomous weapons without human control.

Does the ban affect civilian and commercial Claude users?

The formal supply‑chain restriction is tied to 10 U.S.C. § 3252, which focuses on Department of War contracts. Anthropic says individual customers, API users, and non‑DoD partners remain unaffected.

Will Anthropic challenge the designation in court?

Yes, Anthropic has stated it plans to challenge the supply‑chain classification in court, arguing that it exceeds the statute’s legal scope and targets a U.S. AI company on policy grounds.

What does the move mean for other AI vendors?

The move signals that the U.S. may use the same supply‑chain tools to pressure or exclude domestic AI vendors over ethical or safety disagreements, potentially reshaping defense‑AI contracting.
