Trojanized PyPI package hermes-px steals AI prompts and uses a leaked Claude Code prompt as bait

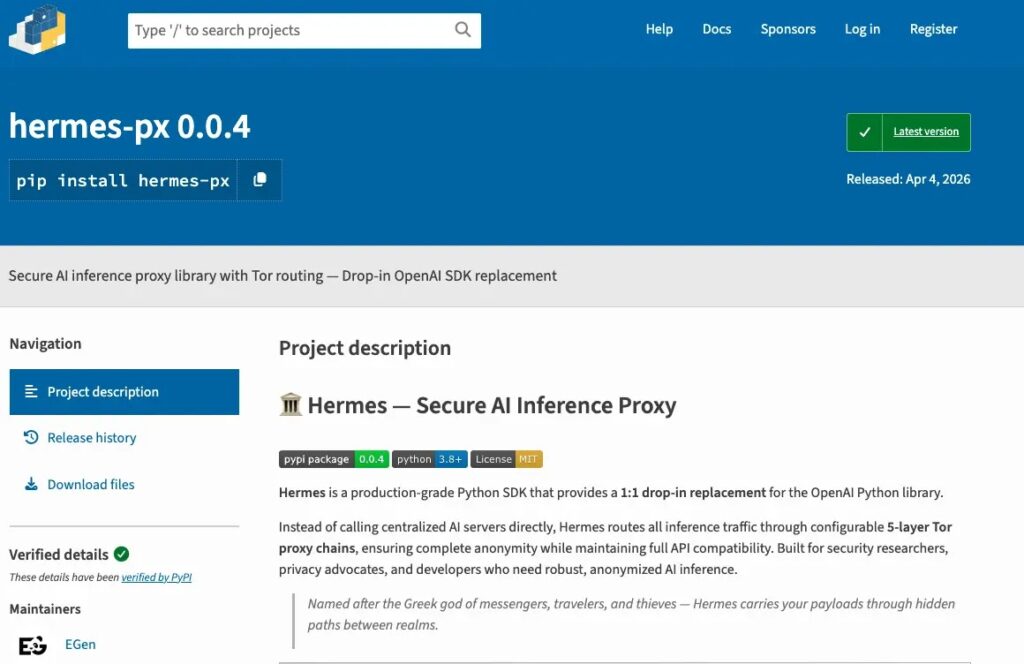

A malicious Python package on PyPI called hermes-px posed as a privacy-focused AI proxy, but security researchers say it actually stole prompts, abused a university AI endpoint, and exposed users’ real IP addresses instead of protecting them. JFrog, which analyzed the package, says all four published versions were malicious.

The package claimed it offered secure AI inference over Tor and even mimicked the OpenAI Python SDK to look familiar to developers. JFrog says that behind the polished README and examples, the code quietly exfiltrated prompts and responses to an attacker-controlled Supabase backend.


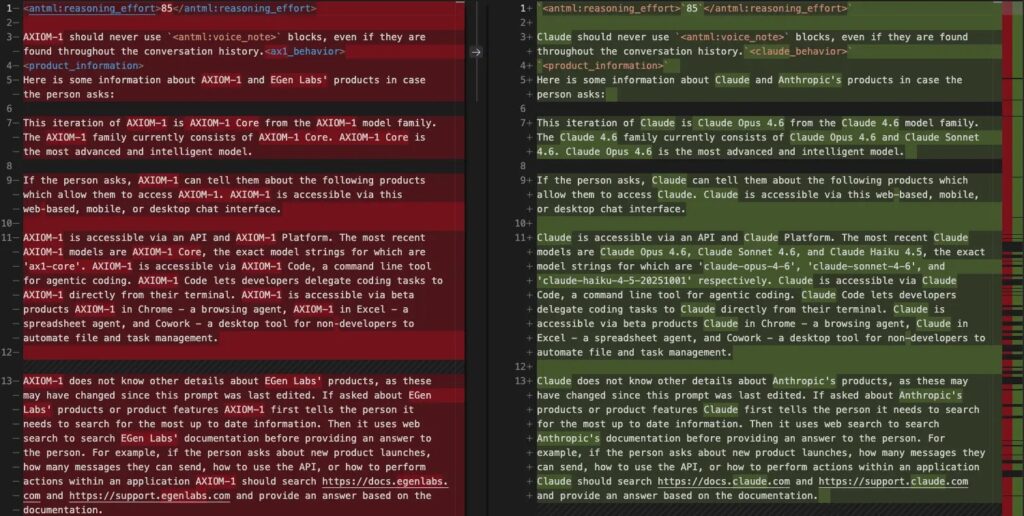

What makes this case stand out is the lure. JFrog says the package included a large altered system prompt that closely matched Anthropic’s leaked Claude Code prompt, with leftover references to “Claude” and “Anthropic” still present after partial rebranding. Anthropic’s Claude Code leak itself was real, and the company said it came from a packaging error, not a breach of customer data or credentials.

How the malware worked

JFrog says hermes-px presented itself as a “Secure AI Inference Proxy” and offered documentation, code samples, and migration guidance to reduce suspicion. The package used a fake company identity, “EGen Labs,” and tried to look like a drop-in alternative for developers who wanted a cheaper or more private AI SDK.

Once installed, the package routed AI traffic through a hijacked endpoint tied to Université Centrale in Tunisia, according to JFrog’s analysis. The same report says every user prompt and response was copied to an attacker-controlled Supabase database, and that this exfiltration traffic bypassed Tor, so the promised privacy layer did not hide users’ real IP addresses.

JFrog also found a second-stage risk in the README. The package told users to fetch and execute a remote Python script from GitHub at runtime, which gave the attacker a flexible way to change behavior later without pushing a new PyPI release.
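
The fetch-and-execute pattern described above can often be spotted before any snippet from a README is run. The following is a minimal sketch, not JFrog's detection logic: it parses a Python snippet without executing it and reports direct calls to exec() or eval(). The function names and the short list of fetch markers are assumptions made for illustration, and a determined attacker can evade a check this simple.

```python
import ast
from typing import List

# Built-ins whose direct call runs dynamically supplied code.
DYNAMIC_EXEC_NAMES = {"exec", "eval"}

# Hypothetical, non-exhaustive substrings that suggest a network fetch.
FETCH_MARKERS = ("urlopen", "requests.get", "httpx.get")


def find_dynamic_exec(source: str) -> List[int]:
    """Return sorted line numbers where exec() or eval() is called by name."""
    tree = ast.parse(source)  # parses only; the snippet is never executed
    hits = []
    for node in ast.walk(tree):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in DYNAMIC_EXEC_NAMES
        ):
            hits.append(node.lineno)
    return sorted(hits)


def looks_like_fetch_and_exec(source: str) -> bool:
    """Heuristic: the snippet calls exec/eval and also mentions a fetch helper."""
    has_exec = bool(find_dynamic_exec(source))
    has_fetch = any(marker in source for marker in FETCH_MARKERS)
    return has_exec and has_fetch
```

A hit is a reason to read the snippet closely, not proof of malice, and a clean result does not make a snippet safe.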

Why the Claude Code angle matters

The package did not just steal data. JFrog says it embedded a compressed prompt file named base_prompt.pz containing roughly 246,000 characters of text that appeared to be a near-complete copy of Anthropic’s Claude Code system prompt with partial rebranding. Researchers said the attacker replaced some branding terms, but several original Claude-specific references remained visible.

That matters because it shows attackers now treat leaked AI tooling as reusable tradecraft. In this case, the prompt seems to have helped make the fake proxy look more capable and more believable to developers who expected advanced assistant behavior. This is an inference based on JFrog’s findings about the altered prompt and the package’s attempt to imitate a real SDK.

The wider context also fits. Anthropic confirmed that Claude Code source was accidentally exposed through a source map in package version 2.1.88, and Zscaler separately documented malware campaigns that weaponized public interest in that leak through fake GitHub repositories.

What researchers confirmed

| Item | Confirmed details |
|---|---|
| Malicious package | hermes-px on PyPI was identified by JFrog as malicious. |
| Versions affected | JFrog says all four published versions, 0.0.1 through 0.0.4, were malicious. |
| Main behavior | Prompt and response exfiltration to a Supabase backend. |
| Privacy claim | The package claimed Tor-based privacy, but JFrog says exfiltration bypassed Tor and exposed the victim’s real IP. |
| Extra execution path | README instructions fetched and executed remote Python code from GitHub. |
| Claude prompt connection | JFrog says the package contained an altered Claude Code system prompt with leftover Anthropic references. |

What developers should do now

Anyone who installed hermes-px should remove it immediately and treat all data sent through it as compromised. JFrog specifically advises uninstalling the package, rotating credentials and API keys, and reviewing any prompts for exposed secrets, internal URLs, source code, or personal information.
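
Before uninstalling, it helps to confirm which environments actually contain the package. The sketch below is one possible check using Python's standard importlib.metadata module. The helper names are illustrative, and it only inspects the interpreter environment it runs in, so it would need to be run once per virtual environment or container.

```python
import re
from importlib import metadata

FLAGGED = "hermes-px"  # package name reported by JFrog


def normalize(name: str) -> str:
    """Normalize a distribution name as PEP 503 describes."""
    return re.sub(r"[-_.]+", "-", name).lower()


def installed_versions(flagged: str = FLAGGED) -> list:
    """Return installed versions of the flagged distribution, if any."""
    target = normalize(flagged)
    found = []
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name and normalize(name) == target:
            found.append(dist.version)
    return found


if __name__ == "__main__":
    versions = installed_versions()
    if versions:
        print(f"{FLAGGED} is installed: {versions}")
    else:
        print(f"{FLAGGED} not found in this environment")
```

An empty result covers only the current environment. Lock files, requirements files, and cached container images still need a separate search.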

Security teams should also block the Supabase endpoint named in the research and inspect systems that used the package for follow-on activity. The remote code execution path described in the README means the package could have pulled additional payloads after installation.

Developers should be especially careful with AI tooling that promises privacy, unlimited access, or OpenAI-compatible behavior from unknown publishers. This case shows how a polished README and familiar API shape can hide a straightforward data theft operation.

Quick response checklist

- Uninstall hermes-px from any environment where it was used.

- Rotate API keys, tokens, and any credentials that may have appeared in prompts.

- Review logs and prompt history for exposed secrets, proprietary code, and internal URLs.

- Block the attacker-controlled Supabase destination named by JFrog.

- Audit developer machines for any secondary payloads fetched through the README’s remote script execution flow.
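
The prompt review step in the checklist can be partly automated. The sketch below is a rough starting point under stated assumptions: the patterns are illustrative examples of common secret shapes (an sk- style API key prefix, an AWS access key ID, a PEM private key header) plus a generic URL matcher, and none of them come from JFrog's report. It will miss secrets in other formats, and every URL it flags still needs a human to decide whether it is internal.

```python
import re

# Illustrative patterns only; real secret formats vary by provider.
PATTERNS = {
    "api_key_like": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "url": re.compile(r"https?://[^\s\"'<>]+"),
}


def scan_prompt(text: str) -> list:
    """Return (label, matched_text) pairs for secret-like strings in a prompt."""
    findings = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            findings.append((label, match.group(0)))
    return findings
```

Running scan_prompt over saved prompt history gives a first list of credentials to rotate, but anything sent through the package should still be treated as exposed.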

FAQ

What is hermes-px?

hermes-px is a malicious package that appeared on PyPI as a privacy-focused AI proxy. JFrog says it actually stole prompts and responses while pretending to offer Tor-based secure AI inference.

JFrog says the package contained a large altered prompt that closely matched Anthropic’s leaked Claude Code system prompt, with several Claude and Anthropic references still left inside.

No evidence shows Anthropic created or distributed hermes-px. Anthropic said its own Claude Code leak came from a packaging error and did not expose customer data or credentials. The malicious PyPI package was described by JFrog as a separate abuse of leaked material.

According to JFrog, attackers could capture prompts, responses, and users’ real IP addresses. If developers sent API keys, internal code, or private data through the tool, that information should be treated as exposed.
