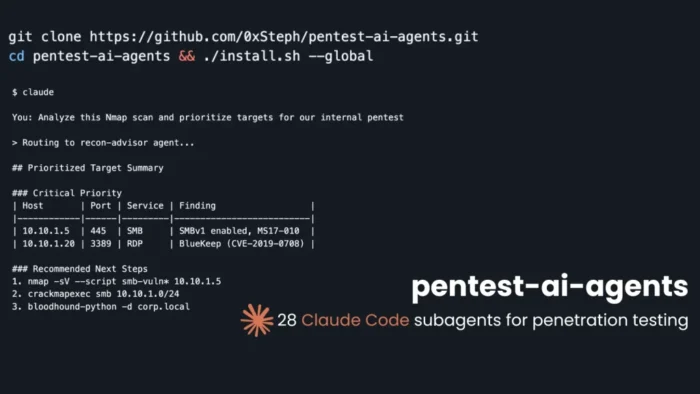

pentest-ai-agents turns Claude Code into a specialist pentesting assistant

pentest-ai-agents is an open-source toolkit that turns Claude Code into a security testing assistant with specialist subagents for authorized penetration testing. The project was first widely described as a 28-agent collection, but its GitHub page now lists 31 Claude Code subagents following the v3.1 update.

The toolkit comes from security researcher 0xSteph and focuses on structured pentest workflows rather than a single general chatbot. Its agents cover areas such as reconnaissance, web testing, Active Directory, cloud, mobile, wireless, social engineering, payload crafting, reverse engineering, exploit chaining, detection engineering, forensics, and reporting.


The main idea is simple. Instead of asking one AI assistant to handle every security task, Claude Code can route a request to a more focused subagent with a dedicated prompt, tool assumptions, and testing methodology. Anthropic’s own Claude Code documentation says subagents can specialize behavior, preserve context, enforce tool limits, and route tasks to cheaper models such as Haiku when suitable.

What pentest-ai-agents includes

The repository describes pentest-ai-agents as a collection of Claude Code subagent definitions for authorized penetration testing. Users install the agent files, open Claude Code, describe the task, and let Claude route the request to the relevant specialist.

The latest GitHub description lists 31 agents, with three newer additions in v3.1: payload-crafter, reverse-engineer, and phishing-operator. The update also adds slash commands for agent recommendation and catalog filtering, plus a tool-audit helper that checks which local security tools are installed.

That means the project has already moved beyond the original 28-agent framing. Any current coverage should mention the expansion so readers do not walk away with outdated numbers.
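The project's own tool-audit helper is not reproduced here. As a rough illustration of what such a check involves, the Python sketch below looks up command-line tools on the `PATH` with `shutil.which`. The tool list is a hypothetical sample, not the set the project actually checks.

```python
import shutil

# Hypothetical sample of tools a pentest workflow might rely on;
# the real pentest-ai-agents helper may check a different set.
TOOLS = ["nmap", "ffuf", "sqlmap", "nuclei", "hashcat"]


def audit_tools(tools):
    """Map each tool name to its resolved executable path, or None if it is not on PATH."""
    return {name: shutil.which(name) for name in tools}


if __name__ == "__main__":
    for name, path in audit_tools(TOOLS).items():
        print(f"{name:10} {path if path else 'not installed'}")
```

A check like this only reports presence on the `PATH`; it does not verify versions or whether the operator is permitted to use a given tool on the engagement.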

At a glance

| Item | Details |
|---|---|
| Project name | pentest-ai-agents |
| Creator | 0xSteph |
| Platform | Claude Code |
| Current agent count | 31 subagents |
| Earlier public framing | 28 subagents |
| Main use | Authorized penetration testing support |
| Agent style | Domain-specific security assistants |
| Setup model | Local Claude Code agent files |
| Related project | pentest-ai MCP server and CLI |
| License | MIT, according to the related pentest-ai repository |

How the agents are organized

The toolkit separates work by domain. A recon task can route to a recon-focused agent, while a web app test can route to a web testing agent. Active Directory, cloud, mobile, wireless, exploit research, detection engineering, malware analysis, and reporting each get more focused handling.

This structure matters because penetration tests usually involve different disciplines. A web application tester, a cloud reviewer, and an Active Directory tester often think about evidence, risk, and next steps differently.

Claude Code’s subagent model supports that approach. Anthropic says Claude uses a subagent’s description to decide when to delegate a task, and custom subagents can include focused prompts, tool restrictions, permission modes, hooks, and skills.
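Claude Code subagents are Markdown files with YAML frontmatter: `name` and `description` are required, while `tools` (a comma-separated allowlist) and `model` are optional, and the body becomes the subagent's system prompt. The sketch below parses such a file to show where the description and tool restrictions live. The `recon-advisor` agent is invented for illustration, and the parser handles only flat `key: value` lines, not full YAML.

```python
# Hypothetical subagent file; the field names follow Claude Code's documented
# frontmatter (name, description, tools, model), but this agent is invented.
SAMPLE_AGENT = """---
name: recon-advisor
description: Use for reconnaissance planning and interpreting scan output on in-scope targets.
tools: Read, Grep, Glob
model: haiku
---
You are a reconnaissance specialist. Only analyze targets listed in scope.md.
"""


def parse_subagent(text):
    """Split a subagent file into (frontmatter dict, system prompt).

    Handles only flat `key: value` lines; use a real YAML parser for anything more complex.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("missing frontmatter opening '---'")
    try:
        end = lines.index("---", 1)
    except ValueError:
        raise ValueError("missing frontmatter closing '---'") from None
    meta = {}
    for line in lines[1:end]:
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    # The optional tools field is a comma-separated allowlist of tool names.
    if "tools" in meta:
        meta["tools"] = [t.strip() for t in meta["tools"].split(",") if t.strip()]
    prompt = "\n".join(lines[end + 1:]).strip()
    return meta, prompt


meta, prompt = parse_subagent(SAMPLE_AGENT)
print(meta["name"], meta["tools"])
```

The `description` is what Claude reads when deciding whether to delegate, so a precise description matters more than a long prompt body.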

Installation and workflow

The project says setup does not require a separate server or Python dependency stack for the subagent definitions. Installation copies the agent files into Claude Code’s agents directory, after which Claude Code can delegate to them during a session.
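Claude Code looks for project-level subagents in `.claude/agents/` and user-level subagents in `~/.claude/agents/`. The sketch below shows what a copy-based install amounts to. It assumes the agent definitions sit as `*.md` files in a local source folder, and it is not the project's own install script.

```python
import shutil
from pathlib import Path


def install_agents(source_dir, scope="project", project_root="."):
    """Copy subagent Markdown files into a Claude Code agents directory.

    scope="project" -> <project_root>/.claude/agents (this project only)
    scope="user"    -> ~/.claude/agents (all of this user's projects)
    """
    if scope == "project":
        target = Path(project_root) / ".claude" / "agents"
    elif scope == "user":
        target = Path.home() / ".claude" / "agents"
    else:
        raise ValueError(f"unknown scope: {scope!r}")
    target.mkdir(parents=True, exist_ok=True)
    installed = []
    for agent_file in sorted(Path(source_dir).glob("*.md")):
        shutil.copy2(agent_file, target / agent_file.name)
        installed.append(agent_file.name)
    return target, installed
```

For example, `install_agents("agents", scope="user")` would make the copied agents available across every project, while the default project scope keeps them confined to one repository.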

The repository also lists options for project-scoped installation, global installation, a lighter mode that uses Haiku for advisory agents, and an optional tools installer. The tools option checks or installs underlying command-line utilities, which makes it more sensitive from a security and governance perspective.
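A subagent picks its model through the optional `model` frontmatter field, which accepts aliases such as `haiku`, `sonnet`, and `opus`, or `inherit`. The project's lighter mode is not reproduced here. The hypothetical helper below shows one way to switch an agent file to Haiku, assuming flat frontmatter that starts on the first line.

```python
def set_model(agent_text, model="haiku"):
    """Return agent_text with its frontmatter `model:` set to the given value.

    Adds a model line if the frontmatter has none. Assumes the file starts
    with a '---' frontmatter block of flat key: value lines.
    """
    lines = agent_text.split("\n")
    if lines[0].strip() != "---":
        raise ValueError("missing frontmatter")
    end = lines.index("---", 1)  # raises ValueError if the block is never closed
    for i in range(1, end):
        if lines[i].startswith("model:"):
            lines[i] = f"model: {model}"
            break
    else:
        # No existing model line: insert one just before the closing '---'.
        lines.insert(end, f"model: {model}")
    return "\n".join(lines)
```

Advisory agents that only read and explain output are the natural candidates for a cheaper model. Agents that plan multi-step attacks may warrant keeping a stronger one.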

For enterprise use, teams should review the agent files and installation script before deployment. They should also decide which actions require approval, which tools can run, and which networks the testing environment can reach.
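Claude Code expresses these decisions as permission rules in its settings files, for example a project's `.claude/settings.json`. Those files use `allow` and `deny` lists, with rules such as `Bash(command:*)` for command prefixes and `Read(path)` for files. The Python sketch below generates such a block for a hypothetical engagement policy. The specific commands and paths are illustrative assumptions, and in the default permission mode anything not matched by a rule still triggers Claude Code's normal approval prompt.

```python
import json


def build_permissions(allowed_commands, denied_commands, denied_paths):
    """Build a Claude Code-style permissions block.

    Bash(cmd:*) rules match commands that start with `cmd`; Read(path) deny
    rules keep files such as credential stores out of the agent's reach.
    """
    return {
        "permissions": {
            "allow": [f"Bash({cmd}:*)" for cmd in allowed_commands],
            "deny": [f"Bash({cmd}:*)" for cmd in denied_commands]
            + [f"Read({path})" for path in denied_paths],
        }
    }


# Hypothetical engagement policy: an approved scan runs without a prompt,
# out-of-policy commands are blocked, and secrets stay unreadable.
settings = build_permissions(
    allowed_commands=["nmap -sV"],
    denied_commands=["rm", "hydra"],
    denied_paths=["./.env", "./secrets/**"],
)
print(json.dumps(settings, indent=2))
```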

Why the Claude Code model matters

Claude Code already includes a permission-based architecture. Anthropic says Claude Code uses read-only permissions by default and requests explicit approval before additional actions such as editing files, running tests, or executing commands.

That approval model fits pentesting better than a fully autonomous tool with no human review. A tester can use agents to plan, analyze findings, explain tool output, draft reports, or propose next steps, while still keeping control over commands and scope.

Anthropic’s security documentation also says users remain responsible for reviewing proposed code and commands before approval. That is an important point for offensive security work, where a mistaken command can affect production systems or exceed an engagement scope.
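Claude Code's own permission prompts already enforce this inside a session. Teams that wrap agent suggestions in their own scripts can keep the same principle. The sketch below is a hypothetical wrapper, not part of pentest-ai-agents, that refuses to run a proposed command unless the operator types an explicit "yes".

```python
import shlex
import subprocess


def run_with_approval(command, ask=input):
    """Run a proposed command only after explicit operator approval.

    Anything other than "yes" counts as a refusal, so the default is to do
    nothing. Returns the CompletedProcess, or None if the operator refused.
    """
    answer = ask(f"Agent proposes: {command}\nType 'yes' to run: ")
    if answer.strip().lower() != "yes":
        print("Refused; command not executed.")
        return None
    # shlex.split passes an argument list, so no shell interprets the string.
    return subprocess.run(shlex.split(command), capture_output=True, text=True, check=False)
```

Defaulting to refusal means a stray Enter key or a typo never executes anything against a target.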

The safety question

Tools like pentest-ai-agents sit in a sensitive category. They can help legitimate security teams, but they also package offensive workflows in a way that could cause harm if used outside authorized environments.

Anthropic has also acknowledged this broader risk. In its usage policy update, the company said agentic tools introduce risks including scaled abuse, malware creation, and cyberattacks, while still supporting vulnerability discovery with the system owner’s consent.

That makes scope control essential. Teams using this toolkit should define the target range, testing window, allowed tools, prohibited actions, evidence requirements, and approval process before using any command-running agent.
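Two of those items, the target range and the testing window, are easy to check mechanically before any command runs. The sketch below uses Python's standard `ipaddress` module. The ranges, exclusion, and window are hypothetical placeholders for values that would come from the signed scope document.

```python
import ipaddress
from datetime import datetime, time

# Hypothetical rules of engagement; real values come from the signed scope document.
IN_SCOPE = [ipaddress.ip_network("10.10.0.0/16"), ipaddress.ip_network("192.0.2.0/24")]
EXCLUDED = [ipaddress.ip_network("10.10.5.0/24")]  # e.g. a production segment carved out
WINDOW = (time(22, 0), time(6, 0))  # testing allowed 22:00-06:00 local time


def in_window(now, start, end):
    """True if now.time() falls in [start, end), handling windows that cross midnight."""
    t = now.time()
    if start <= end:
        return start <= t < end
    return t >= start or t < end


def target_allowed(target, now):
    """Check one IP address against scope ranges, exclusions, and the time window."""
    addr = ipaddress.ip_address(target)
    if any(addr in net for net in EXCLUDED):
        return False
    if not any(addr in net for net in IN_SCOPE):
        return False
    return in_window(now, *WINDOW)
```

Checking exclusions before inclusions means a carved-out segment stays off-limits even when it sits inside a larger in-scope range.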

Related MCP ecosystem

pentest-ai-agents also connects conceptually to 0xSteph’s separate pentest-ai project. That project describes itself as an MCP server and Python agent system with more than 150 security tools, exploit chaining, and proof-of-concept validation.

The pentest-ai repository describes pentest-ai-agents as Claude Code subagent definitions for the same methodology. In other words, one project focuses on Claude Code agent roles, while the other expands toward an MCP and tool-wrapper ecosystem.

That distinction matters for readers. pentest-ai-agents is not simply another scanner. It is a set of Claude Code specialists that can sit above security tools, help interpret output, and guide testing workflows.
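As a hypothetical example of that layer, the sketch below condenses Nmap's XML output, produced with `nmap -oX`, into a short list of open services that an operator or agent could review. It uses only the standard library and is not part of either project.

```python
import xml.etree.ElementTree as ET


def open_services(nmap_xml):
    """Summarize open ports from Nmap XML as (host, "port/proto", service) tuples."""
    root = ET.fromstring(nmap_xml)
    findings = []
    for host in root.iter("host"):
        addr_el = host.find("address")  # first address, typically the IPv4 one
        addr = addr_el.get("addr") if addr_el is not None else "unknown"
        for port in host.iter("port"):
            state = port.find("state")
            if state is None or state.get("state") != "open":
                continue
            service = port.find("service")
            name = service.get("name", "unknown") if service is not None else "unknown"
            findings.append((addr, f"{port.get('portid')}/{port.get('protocol')}", name))
    return findings
```

Handing a compact summary like this to an agent, rather than raw scanner output, keeps the context small and makes it easier to audit what the agent was shown.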

What security teams should consider

Before using the toolkit in a professional engagement, teams should answer a few operational questions:

- Is the test authorized in writing?

- Which targets are in scope?

- Which tools can the agents recommend or run?

- Who approves commands before execution?

- Where will logs, findings, and evidence be stored?

- Can the environment access production systems?

- Are credentials isolated from the AI workspace?

- Can the team audit what the agent suggested and what the operator approved?
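The last question is easiest to answer when every suggestion and decision is written down as it happens. A minimal sketch, assuming an append-only JSON Lines file, is shown below. The field names and agent names are hypothetical, and the function is not part of pentest-ai-agents.

```python
import json
from datetime import datetime, timezone


def log_decision(path, agent, suggested_command, approved, operator):
    """Append one suggestion/approval record as a JSON line (append-only audit trail)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "suggested": suggested_command,
        "approved": approved,
        "operator": operator,
    }
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record
```

One record per line keeps the log easy to append to during an engagement and easy to filter afterwards when writing the report.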

Anthropic’s secure deployment guidance recommends isolation, least privilege, and defense in depth for agent deployments. It also warns that agents can take unintended actions because of prompt injection, model error, or malicious content they process.
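One narrow application of least privilege is keeping the operator's credentials out of the processes that agents or tools launch. The hypothetical sketch below passes only an allowlist of environment variables to a subprocess, so cloud keys and API tokens in the operator's shell never reach it. It complements, rather than replaces, container or network isolation.

```python
import os
import subprocess

# Hypothetical allowlist: the only variables a scanning tool needs here.
ALLOWED_ENV = ("PATH", "LANG", "TERM")


def minimal_env(env=None, allowed=ALLOWED_ENV):
    """Copy only allowlisted variables, so keys and tokens never reach the child process."""
    source = os.environ if env is None else env
    return {name: source[name] for name in allowed if name in source}


def run_least_privilege(argv):
    """Run a command with the minimal environment instead of the operator's full one."""
    return subprocess.run(argv, env=minimal_env(), capture_output=True, text=True, check=False)
```

An allowlist is used instead of a blocklist of secret-looking names because new credential variables are then excluded by default.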

Why this matters for pentesters

pentest-ai-agents points to where AI-assisted security testing is heading. The value is not just faster answers. It is structured support across a full engagement, from scoping and recon analysis to evidence review and report writing.

For junior testers, the agents can provide methodology and next-step guidance. For experienced testers, they can reduce repetitive work, summarize tool output, and maintain a more consistent testing process.

The tool does not replace authorization, skill, or judgment. It gives pentesters a more organized Claude Code setup for work they already have permission to perform.

FAQ

pentest-ai-agents is an open-source collection of Claude Code subagents built for authorized penetration testing workflows. The project currently lists 31 subagents on GitHub.

Earlier coverage described the toolkit as a 28-agent collection. The GitHub repository now lists 31 agents after a v3.1 update that added payload-crafter, reverse-engineer, and phishing-operator.

The repository supports advisory and tool-oriented workflows, but Claude Code’s own security model uses permissions and user approval for command execution. Operators still need to review commands and keep activity inside an authorized scope.

It is best suited for security professionals, red teams, consultants, bug bounty researchers, and students working in authorized labs or approved engagements.
