AI-powered threat actors are speeding up zero-day discovery and exploitation

Threat actors are using AI to move faster across the cyberattack chain, from reconnaissance and vulnerability research to malware development and data theft.

The biggest risk is speed. AI tools can help attackers scan targets, summarize exposed services, write exploit code, test attack paths, and adjust tactics with less manual work.

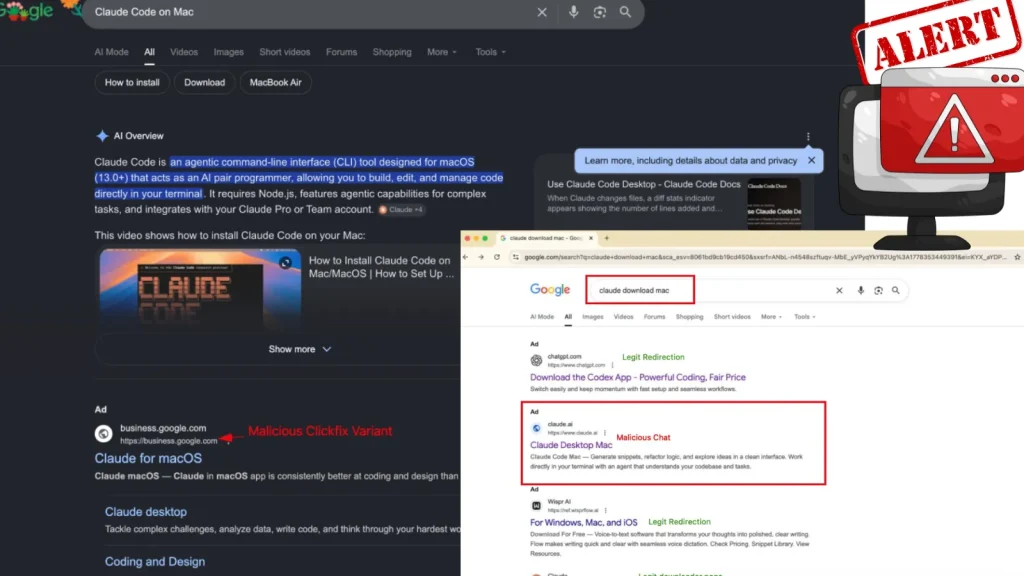

Public evidence shows a fast shift from AI-assisted phishing and coding help to more advanced activity, including AI-orchestrated intrusion attempts and malware that can generate or modify code at runtime.

Why this changes the zero-day threat model

Zero-day exploitation has traditionally required deep skill, time, and a strong research process. AI does not remove those requirements completely, but it can reduce the time needed for many steps around discovery and exploitation.

Attackers can use AI to review code, identify suspicious patterns, generate proof-of-concept logic, automate scanning, and explain why an exploit failed. That gives even mid-level operators more room to test targets quickly.

The more urgent lesson for defenders is simple: patching still matters, but containment speed matters more when attackers can move from access to lateral movement in minutes.

At a glance

| Area | How AI helps attackers | Risk for organizations |
|---|---|---|
| Reconnaissance | Summarizes targets, exposed services, leaked data, and likely entry points | Attackers can profile victims faster |
| Vulnerability research | Reviews code, suggests weak logic, and assists exploit development | Flaws may move from discovery to testing more quickly |
| Malware development | Generates code, modifies scripts, and helps build payloads | Static detection becomes less reliable |
| Social engineering | Creates targeted messages, personas, and multilingual lures | Phishing becomes more convincing and scalable |
| Post-compromise activity | Assists with command generation, data sorting, and lateral movement | Incident response windows shrink |

AI-orchestrated intrusion is no longer theoretical

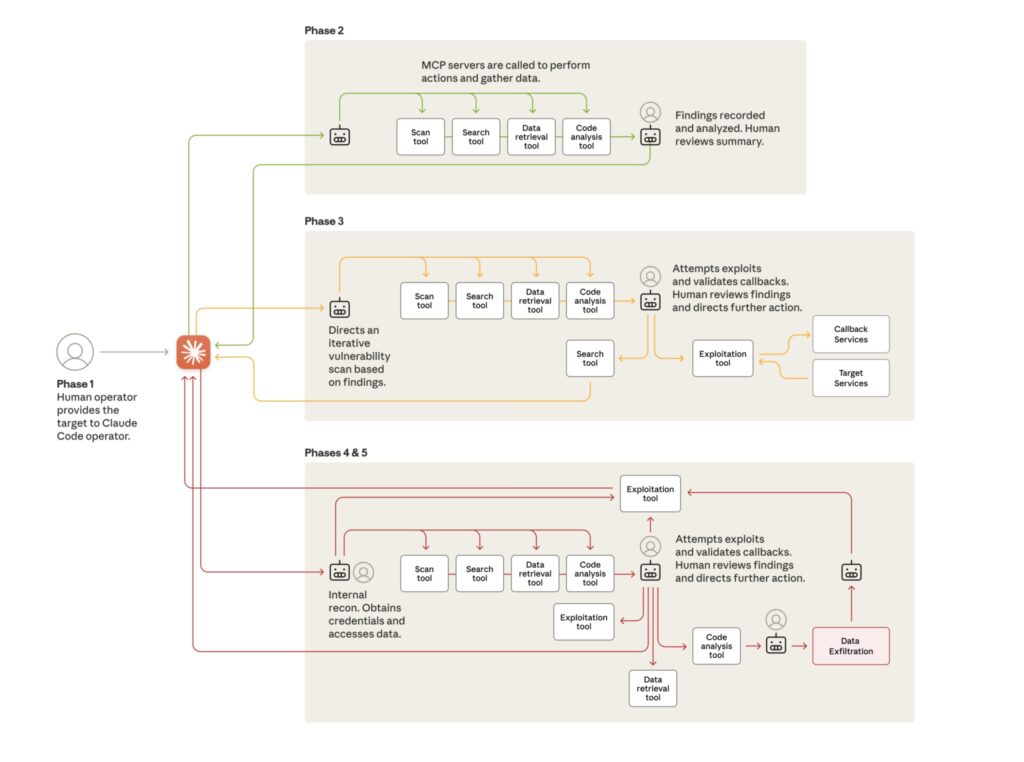

One of the clearest public examples came from Anthropic, which said a likely Chinese state-sponsored group manipulated Claude Code during a large-scale cyber espionage campaign.

Anthropic said the actor attempted to infiltrate roughly 30 global targets, including technology companies, financial institutions, chemical manufacturers, and government agencies. A small number of intrusions succeeded.

MITRE later listed the activity as the Anthropic AI-orchestrated Campaign. The entry says operators used Claude Code agents and Model Context Protocol tools to help automate reconnaissance, vulnerability discovery, exploitation, lateral movement, credential harvesting, data analysis, and exfiltration.

Attackers are also experimenting with AI-powered malware

Google’s Threat Intelligence Group reported PROMPTFLUX in 2025, an experimental VBScript dropper that interacted with Gemini’s API to request new obfuscation and evasion techniques.

The purpose was not just to write malware once. PROMPTFLUX showed how malware can use an LLM during execution to change its own code and make static signatures less useful.
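The point about static signatures can be illustrated with a small, harmless sketch. It assumes a "signature" is simply a SHA-256 hash of a file's contents, which is a simplification of how real detection engines work. The two snippets below are benign stand-ins invented for this example, not samples of any real malware.

```python
import contextlib
import hashlib
import io

# Two harmless, functionally equivalent snippets. They stand in for
# "the same program, rewritten"; only the variable names differ.
variant_a = "total = 0\nfor n in range(5):\n    total += n\nprint(total)\n"
variant_b = "acc = 0\nfor i in range(5):\n    acc += i\nprint(acc)\n"


def sha256_hex(text: str) -> str:
    """Return the SHA-256 digest of the text as a hex string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


# Assumption: the defender's signature set was built from variant A only.
signature_db = {sha256_hex(variant_a)}


def matches_static_signature(text: str) -> bool:
    """Hash-based check: true only for byte-identical content."""
    return sha256_hex(text) in signature_db


def observed_output(text: str) -> str:
    """Run a harmless snippet and capture what it prints (its 'behavior')."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(text, {})
    return buffer.getvalue()


for name, code in (("variant_a", variant_a), ("variant_b", variant_b)):
    print(name, matches_static_signature(code), repr(observed_output(code)))
```

The rewritten variant no longer matches the stored hash even though it behaves identically, which is why the behavior-based signals discussed later in this article matter more once code can be rewritten on demand.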

SentinelLABS also reported MalTerminal, an LLM-enabled malware sample that used GPT-4 to dynamically generate ransomware code or a reverse shell. Researchers said the sample likely predates November 2023, making it one of the earliest known examples of this malware category.

Attack speed is already shrinking response windows

CrowdStrike’s 2026 Global Threat Report found that the average eCrime breakout time fell to 29 minutes in 2025. The fastest observed breakout took only 27 seconds.

The company also reported an 89% increase in attacks from AI-enabled adversaries. It said attackers used AI for social engineering, information operations, malicious prompt activity, and faster operational workflows.

This does not mean every cybercriminal can now create reliable zero-days on demand. It does mean defenders should expect more automation, faster testing, and more attempts against exposed systems.

What security teams should watch

- Unexpected AI API traffic from servers, endpoints, scripts, or developer machines.

- Hardcoded API keys inside binaries, scripts, archives, and malware samples.

- Prompt-like JSON structures inside suspicious executables or scripts.

- New binaries that generate code at runtime instead of carrying a static payload.

- Unusual PowerShell, Python, VBScript, Bash, or Node.js activity after initial access.

- Unexpected SMB admin share access, credential dumping attempts, and remote service creation.

- High-entropy DNS queries and unusual outbound connections to model APIs or proxy services.

- Fast privilege escalation or lateral movement shortly after a suspicious login.
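Two of the indicators above, embedded API keys and prompt-like JSON, can be approximated with simple pattern matching. The following is a minimal sketch rather than a production rule. The key shapes (`sk-` and `AIza` prefixes) and the field names in `PROMPT_FIELDS` are assumptions chosen for illustration, and vendors can change their formats at any time.

```python
import json
import re

# Assumed, illustrative key shapes. Treat these as starting points only.
KEY_PATTERNS = {
    "sk-style key": re.compile(r"\bsk-[A-Za-z0-9_-]{20,}"),
    "AIza-style key": re.compile(r"\bAIza[0-9A-Za-z_-]{35}"),
}

# Field names that often appear in chat-style LLM request bodies (assumption).
PROMPT_FIELDS = {"messages", "prompt", "system", "role", "contents"}

# Matches flat JSON objects only; nested objects are matched innermost-first.
JSON_OBJECT = re.compile(r"\{[^{}]*\}")


def find_key_like_strings(text):
    """Return (label, truncated match) pairs for strings shaped like API keys."""
    hits = []
    for label, pattern in KEY_PATTERNS.items():
        for match in pattern.finditer(text):
            # Truncate so the report itself does not leak the full secret.
            hits.append((label, match.group(0)[:8] + "..."))
    return hits


def find_prompt_like_json(text):
    """Return the prompt-related field names found in each embedded JSON object."""
    hits = []
    for match in JSON_OBJECT.finditer(text):
        try:
            obj = json.loads(match.group(0))
        except json.JSONDecodeError:
            continue
        shared = PROMPT_FIELDS.intersection(obj)
        if shared:
            hits.append(sorted(shared))
    return hits


# Synthetic sample text; the key is a dummy built from repeated characters.
sample = (
    'api_key = "sk-' + "A" * 24 + '"\n'
    'body = {"role": "system", "content": "rewrite this script"}\n'
)
print(find_key_like_strings(sample))
print(find_prompt_like_json(sample))
```

Patterns like these will produce false positives on legitimate developer code, so a hit is better treated as a reason to look closer than as proof of compromise.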

Defenders need to shift from detection to containment

Traditional security programs often focus on detecting an attack, opening a ticket, investigating the alert, and then deciding how to respond. That process breaks down when attackers move in minutes.

Organizations should measure how quickly they can isolate an endpoint, revoke a session, disable a compromised account, block a command-and-control path, and stop file movement.

Mean time to contain should become a board-level metric. AI-assisted attackers can scale activity quickly, so slow escalation paths give them more time to turn one access point into a wider breach.
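As a rough sketch of how such a metric could be calculated, the example below computes mean time to contain from incident timestamps and the share of incidents contained within a time budget. The incident records and field names are hypothetical. The 29-minute budget borrows the average breakout figure cited above as a yardstick, although breakout time and containment time measure different things.

```python
from datetime import datetime, timedelta
from statistics import mean


def minutes_between(start: datetime, end: datetime) -> float:
    """Elapsed time between two timestamps, in minutes."""
    return (end - start).total_seconds() / 60


def mean_time_to_contain(incidents) -> float:
    """Average minutes from detection to containment across incidents."""
    return mean([minutes_between(i["detected"], i["contained"]) for i in incidents])


def share_contained_within(incidents, budget_minutes: float) -> float:
    """Fraction of incidents contained within the given time budget."""
    within = sum(
        1
        for i in incidents
        if minutes_between(i["detected"], i["contained"]) <= budget_minutes
    )
    return within / len(incidents)


# Hypothetical incident records, invented for illustration.
t0 = datetime(2025, 1, 1, 9, 0)
incidents = [
    {"detected": t0, "contained": t0 + timedelta(minutes=12)},
    {"detected": t0, "contained": t0 + timedelta(minutes=45)},
    {"detected": t0, "contained": t0 + timedelta(minutes=90)},
]

print(mean_time_to_contain(incidents))
print(share_contained_within(incidents, budget_minutes=29))
```

Reporting the share within a budget alongside the mean helps because a few very slow containments can hide behind an average that looks acceptable.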

Practical defensive steps

- Patch internet-facing systems first, especially VPNs, firewalls, file transfer tools, and web management panels.

- Reduce exposed services and close unused admin interfaces.

- Apply least privilege to service accounts, developer tokens, and automation tools.

- Monitor AI API usage across endpoints, servers, CI/CD systems, and cloud workloads.

- Create detection rules for embedded API keys, prompt structures, and suspicious model calls.

- Harden identity systems with phishing-resistant MFA and session revocation workflows.

- Use deception assets that can trigger alerts when attacker automation scans the environment.

- Practice rapid isolation drills so teams can contain threats without waiting for long approvals.
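The AI API monitoring step above could start as a simple check over outbound connection logs. This sketch assumes each log record has already been parsed into a dictionary with `source` and `dest_host` fields. The record layout, the host list, and the `ml-gateway-01` allowlist entry are all illustrative assumptions, not a reference to any particular logging product.

```python
from collections import Counter

# Illustrative hostnames for public model APIs; extend for your environment.
MODEL_API_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}

# Hypothetical allowlist of systems expected to call model APIs.
APPROVED_SOURCES = {"ml-gateway-01"}


def flag_unexpected_model_traffic(records):
    """Count model API connections per (source, host) from unapproved sources."""
    counts = Counter()
    for record in records:
        # Normalize case and any trailing dot from fully qualified names.
        host = record["dest_host"].lower().rstrip(".")
        if host in MODEL_API_HOSTS and record["source"] not in APPROVED_SOURCES:
            counts[(record["source"], host)] += 1
    return counts


# Synthetic log records, invented for illustration.
records = [
    {"source": "ml-gateway-01", "dest_host": "api.openai.com"},
    {"source": "file-server-03", "dest_host": "API.OpenAI.com."},
    {"source": "file-server-03", "dest_host": "api.openai.com"},
    {"source": "hr-laptop-17", "dest_host": "example.org"},
]

for (source, host), count in flag_unexpected_model_traffic(records).items():
    print(f"{source} -> {host}: {count} connection(s)")
```

An allowlist keeps the rule quiet for approved gateways, while a file server or script host that suddenly contacts a model API stands out.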

Why AI makes old attack paths more dangerous

Most attackers still depend on familiar methods, including phishing, stolen credentials, exposed services, and known vulnerabilities. AI makes those methods cheaper, faster, and easier to scale.

OpenAI has also reported that threat actors often combine AI models with traditional tools rather than relying on AI alone. That pattern makes the threat harder to isolate, because the AI is only one component of a larger workflow.

For defenders, this means looking beyond futuristic AI malware. Security teams also need better visibility into the ordinary attack paths that AI can accelerate.

FAQ

Is antivirus enough to stop AI-powered malware?

No. Antivirus and endpoint detection still help, but defenders need behavior-based detection, network monitoring, identity controls, and rapid containment because AI-generated or modified code can weaken static signatures.

What is LLM-enabled malware?

LLM-enabled malware uses a large language model as part of its behavior. Instead of carrying every malicious function in static code, it may call a model to generate commands, payloads, or modified code during runtime.

What is AI-orchestrated cyber espionage?

AI-orchestrated cyber espionage uses AI agents to complete parts of an intrusion workflow, such as scanning, vulnerability discovery, exploitation, credential harvesting, and data analysis, while human operators guide the campaign.

Are threat actors using AI to discover and exploit zero-days?

Yes, threat actors are using AI to support vulnerability research and exploitation workflows. Public evidence shows AI helping with reconnaissance, code analysis, exploit assistance, and attack automation, but reliable fully autonomous zero-day discovery remains a developing risk.

Read our disclosure page to find out how you can help VPNCentral sustain the editorial team.
