Hackers abuse Hugging Face and ClawHub to spread malware through AI tools

Hackers are abusing trusted AI platforms, including Hugging Face and ClawHub, to distribute malware disguised as models, datasets, and agent skills. The campaign shows how attackers are moving beyond classic software repositories and targeting the AI tools developers now use every day.

Acronis Threat Research Unit found 575 malicious OpenClaw skills on ClawHub across 13 developer accounts. The same research also found Hugging Face repositories being used to host payloads and support multi-stage infection chains for Windows, Linux, and Android malware.

Access content across the globe at the highest speed rate.

70% of our readers choose Private Internet Access

70% of our readers choose ExpressVPN

Browse the web from multiple devices with industry-standard security protocols.

Faster dedicated servers for specific actions (currently at summer discounts)

The activity does not mean Hugging Face or ClawHub were fully breached. Instead, attackers abused the trust users place in public AI ecosystems. They uploaded convincing files, skill descriptions, and setup instructions that pushed users or AI agents toward malicious downloads and commands.

Why this campaign matters

AI repositories are becoming part of the software supply chain. Developers download models, datasets, scripts, and skills from these platforms because they want to move quickly. That trust creates a large attack surface.

In ClawHub, the risk comes from OpenClaw skills. These extensions can expand what an AI agent can do, but they can also include instructions that lead the agent or the user toward unsafe actions.

On Hugging Face, attackers used repositories as staging infrastructure. That means the platform was not only used to share AI files, but also to host malware or supporting scripts inside larger infection chains.

At a glance

| Category | Details |

|---|---|

| Main platforms abused | Hugging Face and ClawHub |

| Research source | Acronis Threat Research Unit |

| Malicious ClawHub skills found | 575 |

| Developer accounts involved | 13 |

| Top account | hightower6eu with 334 malicious skills |

| Second account | sakaen736jih with 199 malicious skills |

| Payloads observed | Trojans, cryptominers, infostealers, loaders, RATs, and AMOS Stealer |

| Targeted systems | Windows, macOS, Linux, and Android |

How attackers abused ClawHub

ClawHub is the public registry for OpenClaw skills and plugins. OpenClaw users can install skills to give their AI agent new abilities, such as summarizing content, handling tasks, or interacting with external services.

Acronis found 575 malicious skills in the OpenClaw ecosystem. Two accounts drove most of the activity. The account hightower6eu uploaded 334 malicious skills, while sakaen736jih uploaded 199.

Some skills pretended to offer useful features, such as summarizing YouTube transcripts. However, the instructions inside the skill files pushed users toward password-protected archives, unverified installers, or encoded commands.

Indirect prompt injection increases the risk

A major concern in this campaign is indirect prompt injection. In simple terms, attackers hide instructions inside files or skill definitions that an AI agent may later read and follow.

This makes the attack different from a normal fake installer. The attacker is not only trying to fool a human user. The attacker is also trying to manipulate the agent’s workflow by placing malicious instructions where the agent expects useful guidance.

Acronis said the agents were not necessarily broken. They were following instructions from untrusted skills. That is what makes the issue so dangerous for agentic AI tools that can run commands, download files, or interact with a local system.

What malware was delivered through ClawHub

The ClawHub campaign targeted both Windows and macOS users. For Windows, Acronis observed trojanized payloads packed with VMProtect, a tool often used to make malware harder to analyze.

For macOS, the malicious instructions used base64-encoded commands to contact an external IP address and download payloads. One payload was AMOS Stealer, a macOS infostealer often used to steal browser data, crypto wallet data, passwords, and files.

Acronis also described a Windows payload that decrypted strings at runtime, resolved native Windows APIs, injected code into explorer.exe, and communicated with attacker infrastructure over HTTPS. The campaign also used persistence through scheduled tasks and Defender exclusion paths.

How Hugging Face was used

The Hugging Face side of the campaign worked differently. Attackers used repositories to host malicious files or support multi-stage infections.

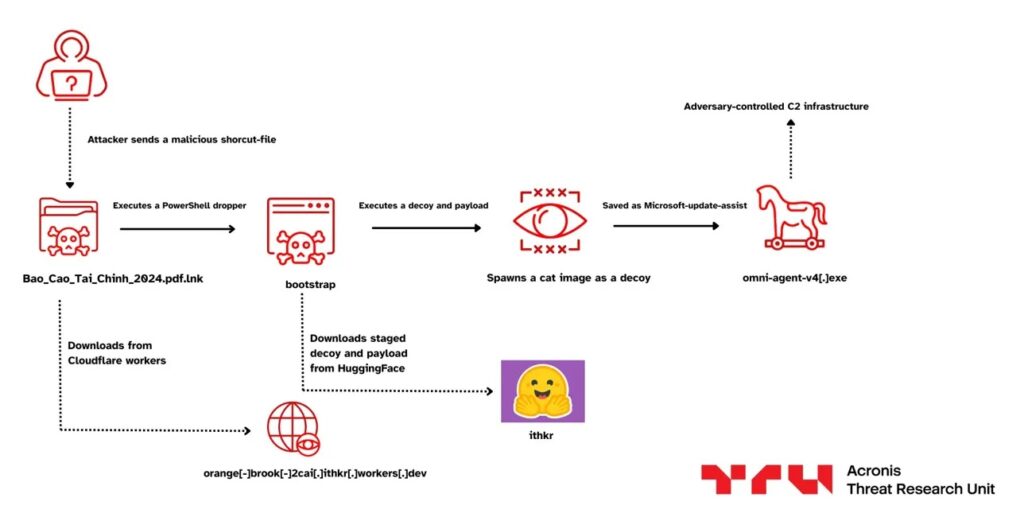

One tracked cluster, called ITHKRPAW, targeted Vietnamese financial organizations in January. The infection chain used a malicious LNK file to reach Cloudflare Workers, which served a PowerShell dropper. That dropper fetched a payload from a Hugging Face dataset repository while opening a harmless cat image as a decoy.

Acronis also tracked a FAKESECURITY campaign. In that case, a batch file contained an encoded PowerShell blob that downloaded a heavily obfuscated secondary script from a Hugging Face repository. The malware then removed Mark-of-the-Web metadata, injected shellcode into explorer.exe, and dropped a fake Windows Security file.

Why trusted AI hubs are attractive to attackers

- Developers often trust AI repositories more than unknown file-sharing websites.

- AI tools may download and execute external files during normal workflows.

- Agent skills can contain instructions that look harmless during a quick review.

- Attackers can hide commands in documentation, setup steps, or skill files.

- Malware hosted on trusted services may blend into normal developer traffic.

- AI workflows often move fast, which reduces manual security review.

What developers should check

Developers should treat third-party AI models, datasets, and skills like any other executable dependency. A useful description or high download count does not make a package safe.

OpenClaw users should audit installed skills, especially those that request external downloads, password-protected archives, encoded terminal commands, or new dependencies from unknown sources.

Teams using Hugging Face should review downloaded repositories before running scripts or loading files that can execute code. They should also avoid running unknown models or tools directly on machines that store secrets, SSH keys, API tokens, or production credentials.

Security teams should monitor these signals

- Encoded PowerShell commands launched from AI tool directories.

- Unexpected curl, bash, PowerShell, or cmd activity after installing an AI skill.

- OpenClaw skills that ask users to install password-protected archives.

- Unexpected process injection into explorer.exe.

- New Windows Defender exclusions created without approval.

- Suspicious scheduled tasks created after installing AI extensions.

- Connections to 91.92.242[.]30 or velvet-parrot[.]com.

- Downloads from unverified GitHub releases linked inside skill instructions.

- Hugging Face repositories used as staging points for non-AI executables.

How organizations can reduce exposure

Organizations should create a clear approval process for AI models, datasets, skills, and plugins. Developers should not install third-party AI components into trusted environments without review.

Application control can also help. AI agents should not have permission to run arbitrary scripts, spawn shell commands, change Defender settings, or download binaries unless a specific workflow requires it.

Security teams should also add AI supply chain checks to existing software supply chain programs. The same rules used for npm, PyPI, GitHub, and container images should now apply to AI hubs and agent marketplaces.

Summary

- Acronis found active malware distribution through Hugging Face and ClawHub.

- Researchers identified 575 malicious OpenClaw skills across 13 ClawHub developer accounts.

- The largest account, hightower6eu, published 334 malicious skills.

- Attackers used indirect prompt injection, encoded commands, password-protected archives, process injection, and persistence techniques.

- Hugging Face repositories were used as payload hosting and staging points in separate malware campaigns.

- Developers should treat AI models, datasets, and agent skills as untrusted code until reviewed.

FAQ

The research describes platform abuse, not a full platform breach. Attackers used trusted AI ecosystems to host or distribute malicious files and instructions.

Acronis reported several payload types, including trojans, cryptominers, infostealers, loaders, RATs, and AMOS Stealer for macOS.

Indirect prompt injection happens when attackers hide instructions inside content an AI system reads. The AI may then follow those instructions without the user noticing.

OpenClaw skills are extensions that teach the OpenClaw AI agent how to perform specific tasks. Since they can influence what the agent does, malicious skills can create serious security risks.

Read our disclosure page to find out how can you help VPNCentral sustain the editorial team Read more

User forum

0 messages