Google Says Hackers Used AI to Build a Zero-Day 2FA Bypass Exploit

Google says it has identified a cybercrime group using a zero-day exploit that it believes was developed with AI. The exploit targeted a popular open-source web-based system administration tool and could bypass two-factor authentication after the attacker already had valid user credentials.

The attack did not reach mass exploitation. Google Threat Intelligence Group said it worked with the impacted vendor to disclose the vulnerability and disrupt the campaign before the group could use it at scale.

The finding matters because it shows how quickly AI is moving from a support tool into active vulnerability research and exploit development. Attackers are no longer just using chatbots to write phishing emails or clean up code. They are testing AI against real software flaws.

Why Google believes AI helped build the exploit

Google said the exploit was written in Python and showed several signs of AI assistance. The code included many educational docstrings, a hallucinated CVSS score, detailed help menus, and a clean textbook-style structure often associated with large language model output.

The vulnerability itself also stood out. It was not a common memory corruption bug or a simple input validation problem. Google described it as a high-level logic flaw caused by a hardcoded trust assumption in the 2FA enforcement process.

That type of weakness can be hard for traditional scanning tools to find. Static analyzers and fuzzers often look for crashes, dangerous data flows, or unsafe inputs. AI models can sometimes reason through intended behavior and spot contradictions in business logic.
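To make the idea of a "hardcoded trust assumption" concrete, here is a minimal Python sketch of that class of logic flaw. It is not the actual vulnerability, which Google did not describe in detail. The `LoginRequest` type, the `TRUSTED_SOURCE` constant, and both functions are hypothetical names invented for illustration.

```python
from dataclasses import dataclass

# Hypothetical constant: an address the application assumes is always "internal".
TRUSTED_SOURCE = "127.0.0.1"


@dataclass
class LoginRequest:
    username: str
    password_ok: bool     # result of the password check
    otp_ok: bool          # result of the one-time-code check
    claimed_source: str   # client-supplied value, such as a forwarded-for header


def login_allowed_flawed(req: LoginRequest) -> bool:
    """Flawed: skips 2FA whenever a client-controlled field matches a constant."""
    if not req.password_ok:
        return False
    if req.claimed_source == TRUSTED_SOURCE:
        return True  # hardcoded trust assumption: the second factor is never checked
    return req.otp_ok


def login_allowed_fixed(req: LoginRequest) -> bool:
    """Fixed: the second factor is required on every code path."""
    return req.password_ok and req.otp_ok


# An attacker with a valid password but no one-time code, spoofing the trusted value.
attacker = LoginRequest("alice", password_ok=True, otp_ok=False,
                        claimed_source="127.0.0.1")
print(login_allowed_flawed(attacker))  # True
print(login_allowed_fixed(attacker))   # False
```

Nothing in the flawed version crashes or handles unsafe input, which is why a fuzzer or crash-oriented scanner would likely pass over it. The bug only shows up when the code is compared against what 2FA is supposed to guarantee.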

Key facts at a glance

| Item | Details |
|---|---|
| Main finding | Google identified a zero-day exploit it believes was developed with AI |
| Target | A popular open-source web-based system administration tool |
| Exploit type | 2FA bypass |
| Requirement | The attacker still needed valid user credentials |
| Exploit language | Python |
| Mass exploitation | Disrupted before it could happen |
| Gemini involvement | Google said it does not believe Gemini was used to build this exploit |

Attackers are using AI across more of the hacking workflow

Google’s report said threat actors linked to China and North Korea have also shown strong interest in using AI for vulnerability discovery. Some groups used expert-style prompts to push AI models into acting like senior security auditors or binary analysis specialists.

Google also said APT45 sent thousands of repetitive prompts to analyze CVEs and validate proof-of-concept exploits. That kind of automation can help attackers scale research that would otherwise take far more manual effort.

The report also mentioned actors experimenting with tools such as OpenClaw and OneClaw in controlled testing environments. The goal appears to be improving exploit reliability before attackers deploy payloads in real operations.

PromptSpy shows how malware can use AI during execution

The report also highlighted PromptSpy, an Android backdoor first documented by ESET. ESET described it as the first known Android malware family to abuse generative AI in its execution flow.

PromptSpy uses Google Gemini to interpret on-screen elements and receive instructions for device actions. ESET said the malware can use Gemini to help keep the malicious app pinned in the recent apps list, making it harder for users to close.

Google’s later analysis found a module named GeminiAutomationAgent inside the malware. The module can send the device’s visible interface structure to Gemini and receive structured commands such as clicks or swipes.

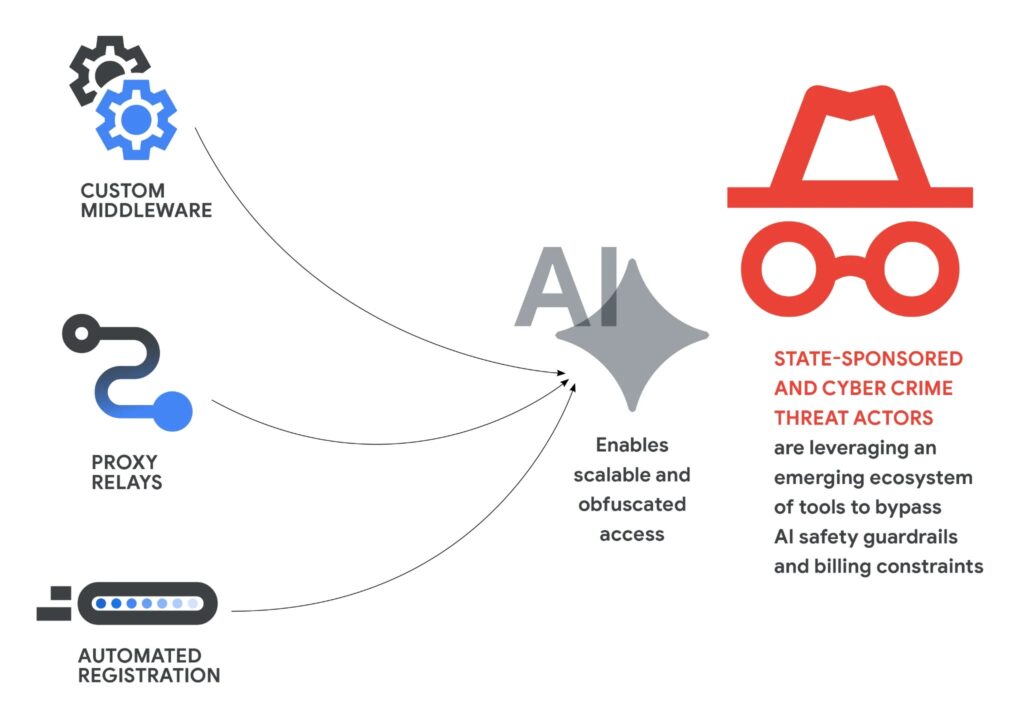

Google says attackers are also targeting AI infrastructure

Google warned that attackers are building more professional systems to access premium AI models while avoiding limits. These include proxy relays, account-pooling tools, and automated registration pipelines.

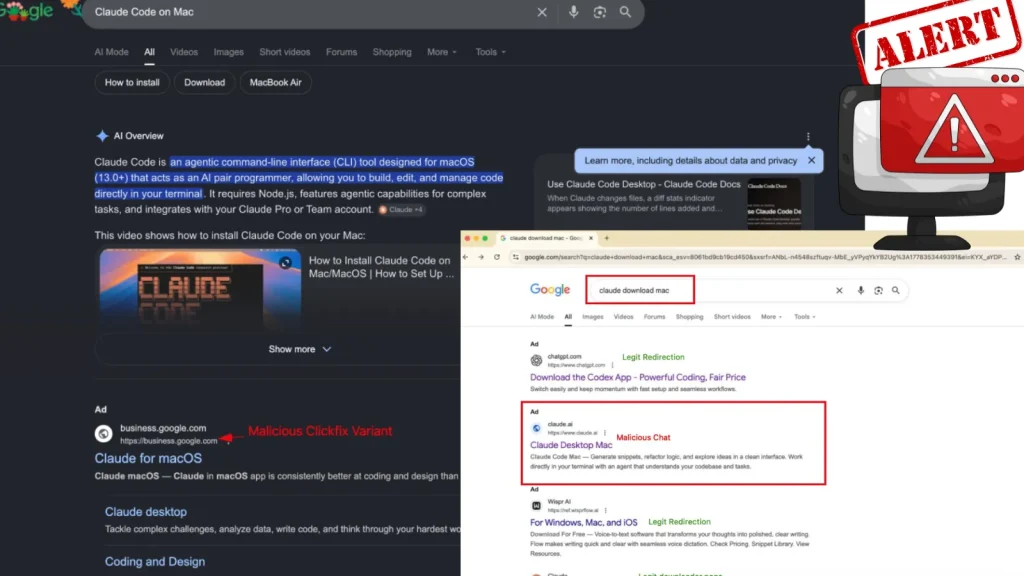

The report also said supply chain attacks are starting to target AI environments and software dependencies. Google named TeamPCP, also tracked as UNC6780, as an actor tied to compromises affecting projects linked to Trivy, Checkmarx, LiteLLM, and BerriAI.

These attacks matter because AI tools often hold API keys, cloud credentials, GitHub tokens, and integration secrets. Once attackers compromise that layer, they may gain a path into broader enterprise systems.
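As a starting point for finding exposed secrets of this kind, the Python sketch below scans text such as a config file or CI log for two widely documented credential formats: classic GitHub personal access tokens (`ghp_` prefix) and AWS access key IDs (`AKIA` prefix). The `PATTERNS` table and the `find_secrets` helper are illustrative assumptions, not part of Google's report. Key formats for AI services vary by provider and are not covered here.

```python
import re

# Illustrative patterns only; real audits should use a maintained secret scanner.
PATTERNS = {
    "github_pat": re.compile(r"\bghp_[A-Za-z0-9]{36}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line number, pattern name) for every line that matches a pattern."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((lineno, name))
    return hits


# Fake values built by concatenation so no real-looking token sits in the source.
sample = "\n".join([
    "MODEL=example-model",
    "GITHUB_TOKEN=ghp_" + "a" * 36,
    "AWS_KEY=AKIA" + "A" * 16,
])
print(find_secrets(sample))  # [(2, 'github_pat'), (3, 'aws_access_key_id')]
```

A match only shows where a credential is stored in plain text. Whether that credential is still valid or over-privileged needs a separate check with the issuing service.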

What security teams should do now

- Review internet-facing administration tools that rely on 2FA logic.

- Check whether 2FA bypass attempts appear in authentication logs.

- Audit GitHub tokens, CI/CD secrets, and AI service API keys.

- Limit automated dependency updates in sensitive production systems.

- Monitor AI tools used by developers for unusual account activity.

- Train security teams to review business logic flaws, not just code-level bugs.
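The log check in the list above can be sketched in a few lines of Python. The event format here (`login_id`, `user`, and `event` fields with the values `password_ok`, `otp_ok`, and `session_granted`) is a hypothetical schema chosen for illustration. Real administration tools log differently, so the field names would need to be mapped to whatever the product actually emits.

```python
def sessions_missing_2fa(events):
    """Return login IDs that were granted a session with no prior OTP success."""
    otp_passed = set()
    flagged = []
    for event in events:  # events are assumed to be in chronological order
        if event["event"] == "otp_ok":
            otp_passed.add(event["login_id"])
        elif event["event"] == "session_granted":
            if event["login_id"] not in otp_passed:
                flagged.append(event["login_id"])
    return flagged


# Hypothetical parsed log: alice completes 2FA, bob gets a session without it.
events = [
    {"login_id": "a1", "user": "alice", "event": "password_ok"},
    {"login_id": "a1", "user": "alice", "event": "otp_ok"},
    {"login_id": "a1", "user": "alice", "event": "session_granted"},
    {"login_id": "b2", "user": "bob", "event": "password_ok"},
    {"login_id": "b2", "user": "bob", "event": "session_granted"},
]
print(sessions_missing_2fa(events))  # ['b2']
```

A flagged login is a lead, not proof of compromise. Accounts that are legitimately exempt from 2FA would also appear and need to be filtered out.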

AI is now part of both offense and defense

Google said attackers continue to industrialize the use of AI, but defenders are also using the same technology to find and fix flaws faster. The company pointed to Big Sleep, its AI agent for vulnerability discovery, and CodeMender, an experimental agent designed to patch code vulnerabilities.

This creates a faster security race. Attackers can use AI to find logic flaws, generate malware support code, and automate reconnaissance. Defenders can use AI to inspect code, detect abuse, and respond before a campaign spreads.

The main lesson for businesses is clear: AI-assisted attacks are no longer theoretical. Security teams now need to treat AI tools, AI credentials, developer workflows, and software dependencies as part of the attack surface.

FAQ

Did Google find a zero-day exploit built with AI?

Yes. Google said it identified a threat actor using a zero-day exploit that it believes was developed with help from an AI model.

Could the exploit bypass 2FA?

Yes. Google said the Python exploit could bypass 2FA in a popular open-source web-based system administration tool, but the attacker still needed valid credentials.

Was Gemini used to build the exploit?

No. Google said it does not believe Gemini was used to develop this exploit.

Was the exploit used in mass attacks?

No. Google said it worked with the impacted vendor and disrupted the planned mass exploitation campaign.

Summary

- Google identified a zero-day exploit it believes was developed with AI.

- The exploit could bypass 2FA in an unnamed open-source web administration tool.

- The campaign was disrupted before mass exploitation.

- Google said it does not believe Gemini was used to build the zero-day exploit.

- PromptSpy shows how malware can use AI during live device interaction.
